Automated visual inspection: use cases, examples & implementation tips

April 11, 2024

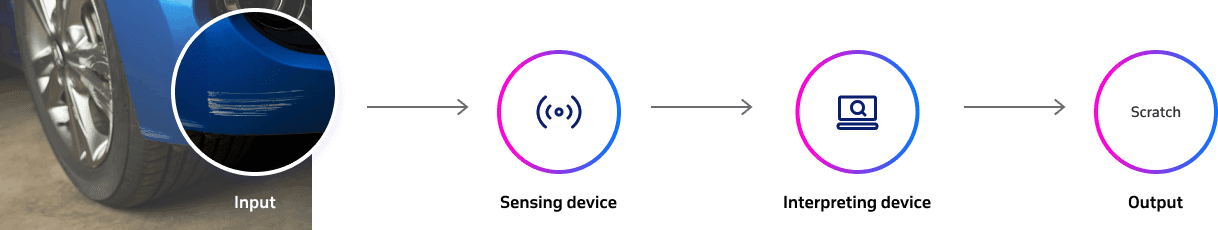

Automated visual inspection (AVI) uses computer vision to analyze images or videos of products and detect defects, anomalies, or quality issues without human intervention. Integrated into assembly lines and production floors, AVI enhances quality control and efficiency in manufacturing processes.

Conventionally, manufacturers employ a large number of workers to manually inspect each item coming out of the assembly line for defects. However, despite in-depth guides and sufficient training, humans still make errors due to distraction or fatigue. Moreover, the production capacity will always be capped by the number of inspection specialists, which hinders scalability.

This is how computer vision software has made its way into manufacturing plants. With automated visual inspection (AVI) systems in place, manufacturers can achieve high product quality while ensuring maximum production capacity.

How automated visual inspection works

To understand the strengths of an automated visual inspection (AVI) system, let’s compare it to its predecessor, an automated optical inspection (AOI) system. AOI systems use machine vision technology and high-resolution optical cameras that capture visual information. This data is then compared to the template (an image of a particular non-defective item in perfect condition) for anomaly detection. However, even a slight change in color or lighting can confuse technologies used in AOI and lead to false positives, hindering the automation potential of the whole system. Besides, AOI systems often struggle to differentiate between permissible cosmetic and functional defects.
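The template comparison described above can be sketched in a few lines. This is a minimal illustration, not how any particular AOI product works: the 4x4 grayscale image, the mean-absolute-difference metric, and the threshold of 10 are all assumptions chosen to show how a uniform lighting change alone can trigger a false positive.

```python
# Hypothetical 4x4 grayscale template (values 0-255) of a non-defective item.
TEMPLATE = [
    [100, 100, 100, 100],
    [100, 150, 150, 100],
    [100, 150, 150, 100],
    [100, 100, 100, 100],
]


def mean_abs_diff(image, template):
    """Average absolute per-pixel difference between two same-sized images."""
    total = 0
    count = 0
    for image_row, template_row in zip(image, template):
        for pixel, reference in zip(image_row, template_row):
            total += abs(pixel - reference)
            count += 1
    return total / count


def is_defective(image, template, threshold=10.0):
    """Flag the item when it deviates from the template by more than the threshold."""
    return mean_abs_diff(image, template) > threshold


def brighten(image, offset):
    """Simulate a global lighting change, clipping values to the 0-255 range."""
    return [[min(255, max(0, pixel + offset)) for pixel in row] for row in image]


good_item = [row[:] for row in TEMPLATE]
same_item_brighter = brighten(good_item, 20)

print(is_defective(good_item, TEMPLATE))           # False
print(is_defective(same_item_brighter, TEMPLATE))  # True, although nothing is wrong with the item
```

The second item is physically identical to the first. Only the simulated lighting changed, yet the fixed threshold rejects it.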

Manufacturers can overcome these limitations by augmenting existing AOI systems with machine learning.

With machine learning added to AOI, companies don’t have to reconfigure the system each time a new variable is introduced. Instead, the system learns from a large dataset of historical inspection data and picks up the patterns it needs for defect classification on its own.

Thus, tolerance to variation and environmental anomalies is one of the most important differences between AOI and AVI. Powered by machine learning, automated visual inspection systems make decisions not only by comparing a captured image to the reference but also by understanding its contents. This significantly expands the range of defects an AVI system can inspect and increases the accuracy and reliability of inspection results.

AVI for manufacturing across industries

Automated visual inspection systems are widely used for defect identification in industries where adaptability, scalability, and precision are paramount.

Aerospace

When it comes to quality control, aerospace is one of the most regulated industries in the world, with both compliance and human lives at stake. Automated visual inspection systems can significantly streamline end-product quality control by detecting defects during assembly, increasing the efficiency of aerospace manufacturing processes.

AutoInspect

AutoInspect is a robot-based optical system developed by 3D.aero that uses an AI-powered inspection system and industrial robotics for smart defect classification. Besides increased accuracy and reliability, 3D.aero reports that a full aero-engine combustion chamber can be examined in less than four hours, which is 80% faster than the manual inspection process. Interestingly, 3D.aero doesn’t let its artificial intelligence algorithm automatically improve itself because safety is paramount in such a high-stakes industry. Currently, new training data is added only after a human expert’s approval.

Image title: AutoInspect AI and robotics system

Image source: 3d-aero.com

Automotive

In such a large-scale industry as automotive, even slight improvements in operational efficiency can bring a significant competitive advantage. By moving from manual to automated visual inspection, automotive manufacturers can streamline their production processes while ensuring adherence to stringent quality and regulatory standards.

For example, AVI can streamline weld inspection for frame integrity, check the uniformity of tire tread patterns, and verify the integrity of airbag installations to ensure compliance with safety standards using video object recognition. Such solutions utilize advanced imaging and ML algorithms to accurately and reliably detect defects.

Volvo & UVeye

Since 2020, Volvo Cars has been using a computer vision-based Atlas quality inspection system developed by UVeye. At the end of the assembly line, each vehicle is inspected by more than 20 computer vision-powered cameras installed in an aluminum tunnel. Each camera takes hundreds of images per second, allowing the AI algorithm to assess the surface quality in detail. The system is more efficient and accurate than conventional manual inspection methods, detecting from 10% to 40% more defects, including scratches, dents, and component alignment anomalies. The system can detect even the tiniest defects, measuring 0.2 millimeters in size.

Medical devices

Medical device manufacturing is subject to strict regulations. For example, in the US and Europe, the respective regulatory bodies require a Unique Device Identifier (UDI) to track medical devices through the supply chain. Medical equipment is often marked with chemically processed direct part marking (DPM) text, which can only be deciphered by deep learning-enabled automated visual inspection systems.

By employing sophisticated deep learning models, AVI technology excels in inspecting life-saving devices such as insulin pens, syringes, and other instruments. The technology meticulously examines each component for defects, from the precision of needle tips to the secure fit of injector plungers, ensuring every product complies with FDA and other standards. Because medical devices are built from complex and highly varied components, automated visual inspection solutions with deep learning models at their core are well suited to verifying that the product is safe to use.

Dovideq Medical Systems

Dovideq Medical Systems, a Dutch organization that manufactures measuring instruments for minimally invasive surgery, developed LightControl, an automated system for inspecting rigid endoscopes. Endoscope manufacturers don’t tolerate any quality compromises since poorly manufactured devices can lead to serious injuries and misdiagnoses. LightControl uses IDS cameras to measure six parameters and a neural network to verify that the endoscope is in flawless condition during production and maintenance.

Pharmaceuticals

Pharmaceutical manufacturers can implement AVI systems for pill inspection. Traditionally, human experts manually inspect pills before packaging to ensure the product is free from surface defects and has the correct labeling, color, and shape. A conventional machine vision system can be easily tricked by pills’ reflective surfaces, which often make them look damaged. This is why the industry is turning to more advanced AVI systems that can accurately identify and classify defects not present in initial training sets.

Stevanato Group

Stevanato Group produces inspection solutions for various pharmaceutical products, including ampoules, vials, syringes, and bottles. The company uses high-performance CMOS cameras, advanced illumination, and other techniques to improve the inspection of different containers.

Relying heavily on deep learning, Stevanato Group’s systems keep false rejection rates to a minimum. For example, Stevanato Group recently developed an AVI machine for inspecting ampoules with sodium chloride and vials containing a freeze-dried anti-rabies vaccine. For a Central American client, the company installed a system that uses advanced trajectory algorithms and line scan cameras to inspect turbid suspensions in vials of vaccines for children.

AVI system components & their implementation tips

An AVI system consists of sensing devices and processing software, so companies need to select the appropriate hardware and software technologies for the task. To build an optimal visual inspection system, manufacturers should consider lighting conditions, defect classification complexity, object size, and other factors.

Software

Machine learning algorithms

Machine learning algorithms are most suitable for cases when the types of defects are well-defined and the variance of defects is comparatively high.

Rule-based visual comparison software

Rule-based visual comparison software is effective for use cases with stable and predictable conditions and consistent item defects. For example, in automotive manufacturing, when verifying the uniformity of tire tread patterns, the system chooses between two options: either the pattern perfectly aligns with the blueprint or it doesn’t.
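A rule of this kind reduces to a fixed pass/fail check. The sketch below is illustrative only: it assumes an upstream step has already extracted tread groove positions from the image, and the blueprint positions, the 0.5 mm tolerance, and the function name `pattern_matches` are hypothetical.

```python
# Hypothetical blueprint: expected groove positions across the tread, in millimeters.
BLUEPRINT_GROOVES_MM = [10.0, 30.0, 50.0, 70.0]
TOLERANCE_MM = 0.5  # assumed allowable deviation per groove


def pattern_matches(measured_mm, blueprint_mm=BLUEPRINT_GROOVES_MM,
                    tolerance_mm=TOLERANCE_MM):
    """Return True only if every measured groove lies within tolerance of the blueprint."""
    if len(measured_mm) != len(blueprint_mm):
        return False  # a missing or extra groove fails outright
    return all(
        abs(measured - expected) <= tolerance_mm
        for measured, expected in zip(measured_mm, blueprint_mm)
    )


print(pattern_matches([10.1, 29.8, 50.3, 70.0]))  # True
print(pattern_matches([10.1, 29.8, 51.2, 70.0]))  # False, third groove is off by 1.2 mm
print(pattern_matches([10.1, 29.8, 50.3]))        # False, one groove is missing
```

Because the rule is fixed, it is easy to audit, but it only works while the parts and imaging conditions stay as predictable as the rule assumes.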

Statistical analysis software

Statistical analysis software is often used to track complex quality trends over time. For example, the system can analyze images over time to detect variations in paint thickness or texture. If the software detects a trend of increasing variance in paint thickness, this could indicate a problem in the painting process, such as equipment wear or incorrect paint formulation mixing.
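One simple way to surface such a trend is to compare the variance of recent measurements with the variance of an earlier baseline window. The following sketch assumes paint thickness has already been estimated per item (in micrometers). The window size, the 2x ratio, and the sample readings are hypothetical values for illustration, not recommended settings.

```python
from statistics import pvariance


def rolling_variance(values, window):
    """Population variance of each consecutive window of measurements."""
    return [
        pvariance(values[end - window:end])
        for end in range(window, len(values) + 1)
    ]


def variance_is_rising(values, window=5, ratio=2.0):
    """True if the latest window's variance exceeds `ratio` times the first window's."""
    variances = rolling_variance(values, window)
    if len(variances) < 2:
        return False  # not enough data to compare two windows
    return variances[-1] > ratio * variances[0]


# Hypothetical thickness readings in micrometers, one per inspected item.
stable = [100.0, 100.5, 99.5, 100.2, 99.8, 100.1, 99.9, 100.3, 99.7, 100.0]
drifting = [100.0, 100.5, 99.5, 100.2, 99.8, 101.5, 98.0, 102.5, 97.0, 103.0]

print(variance_is_rising(stable))    # False
print(variance_is_rising(drifting))  # True
```

A production system would more likely rely on established statistical process control charts. The point here is only that the signal is a change in spread over time rather than any single out-of-range reading.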

Hardware

Cameras

High-resolution cameras are crucial for capturing as many details from images as possible. The camera choice mostly depends on the size of the objects and production line speed.
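As a rough illustration of how object size and line speed feed into camera selection, the sketch below applies two common rules of thumb, both stated here as assumptions: the smallest defect should span about three pixels, and the part should move no more than one pixel during the exposure. The field of view, defect size, and line speed are hypothetical numbers.

```python
import math


def required_pixels(field_of_view_mm, smallest_defect_mm, pixels_per_defect=3):
    """Pixels needed along one axis so the smallest defect covers `pixels_per_defect` pixels."""
    return math.ceil(field_of_view_mm / smallest_defect_mm * pixels_per_defect)


def max_exposure_s(field_of_view_mm, sensor_pixels, line_speed_mm_s, max_blur_pixels=1.0):
    """Longest exposure (seconds) that keeps motion blur within `max_blur_pixels`."""
    mm_per_pixel = field_of_view_mm / sensor_pixels
    return max_blur_pixels * mm_per_pixel / line_speed_mm_s


# Hypothetical setup: 200 mm field of view, 0.5 mm defects, conveyor at 500 mm/s.
pixels = required_pixels(field_of_view_mm=200, smallest_defect_mm=0.5)
exposure = max_exposure_s(field_of_view_mm=200, sensor_pixels=pixels, line_speed_mm_s=500)

print(pixels)                       # 1200
print(round(exposure * 1e6), "µs")  # 333 µs
```

Larger objects or smaller defects push the required resolution up, while faster lines push the allowable exposure down, which in turn raises the demands on lighting.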

Sensors

Sensors are used to capture information and parameters that are not visually apparent. These can include 3D sensors for measuring object depth, LIDAR for precise distance mapping, or infrared sensors for detecting heat levels.

Lighting

Proper lighting is essential to ensure that the cameras can capture clear, high-quality images. The type of lighting to install depends on the inspection environment and object characteristics. For example, shiny metal parts often require diffuse lighting to minimize reflections that could hide cosmetic defects, while a textile pattern needs bright light to highlight its texture.

Image processing hardware

Deep learning requires above-average processing power to handle the large amounts of data captured by cameras and sensors. Therefore, powerful graphics processing units (GPUs) and dedicated digital signal processors (DSPs) are essential for real-time analysis of visual data.

Our computer vision services & solutions

Itransition experts can develop various AI-driven systems that process visual data and help companies automate and improve quality control in healthcare, logistics, manufacturing, retail, and other industries.

Computer vision consulting

Itransition provides comprehensive computer vision consulting and implementation services to help companies integrate automated visual inspection and other CV-powered solutions into their processes. From ideation to maintenance and support, our experienced team guides you through the entire project lifecycle.

Defect detection & surface inspection

We build specialized solutions for detecting defects and anomalies on products’ surfaces to streamline quality control for industries like automotive, electronics, and consumer goods. Our systems analyze images using bespoke deep learning models to identify scratches, dents, discolorations, and other imperfections.

Image & video analytics

We build advanced image and video analytics software to help companies analyze visual content faster and get more in-depth insights. This is especially useful for assessing consumer sentiment in real-time, analyzing product defects, and enabling visual inspection automation.

Image segmentation

We develop image processing and segmentation solutions that break down visual content into specific objects, which is useful for counting vehicles and people in crowds and segmenting CT scans.

Transforming quality control with AVI

Humans will always be inherently worse at performing routine, daunting, and uninspiring inspection tasks than machines. At the same time, machines are more than capable of 24/7 inspection while achieving a speed and accuracy that is impossible for humans. In many ways, AI-powered quality control facilitates healthy competition between manufacturing organizations and allows them to create more products without compromising their quality.

Implementing automated visual inspection systems and computer vision in manufacturing processes is a no-brainer in the majority of cases. Decision-making mostly comes down to the technology selection and the choice of the production line stage. If you are looking to improve production efficiency, reduce operational costs, and improve product quality in your manufacturing business, Itransition can help you develop a tailored AVI system for your particular niche and use case.
