A mobile platform for media content creation

Itransition provided technology research, created a proof of concept, and developed iOS and Android mobile apps for media content creation, helping the customer get Series B funding.


Context

Our customer, a European startup, came up with an idea for a mobile audio and video editing app that would enable media creation, viewing, and distribution. Aimed at music artists and producers, the app would enable them to become audio/video content creators, publishers, and distributors regardless of their experience and background. Thanks to the solution’s social network capabilities, artists could be discovered and their content reused, helping them gain popularity and monetize their work.

The customer decided to start with audio editing functionality to test this hypothesis, as they considered it more important for the app’s target audience. They wanted to begin with real-time audio processing to promote the product and expand the circle of stakeholders. Video editing functionality was added to the project’s scope later on.

The customer needed a technology partner for research and development and reached out to us on the recommendation of one of Itransition’s clients. Considering our expertise in custom mobile app development and in the media and entertainment domain, they decided to hire us for the project.

Solution

Discovery phase & PoC delivery

Audio features for the iOS app

We suggested creating a PoC of the audio functionality for iOS: the app concept involved integrating emerging technologies, the target audience’s needs were not yet clear, and iOS was the most suitable platform for testing both. In only six weeks, our team performed business analysis, documented the customer’s requirements, carried out technology research, and delivered the PoC.

Most features could be implemented using existing libraries with occasional fine-tuning, but autotune required dedicated research, after which our team chose AudioKit as the most suitable library.

After the discovery phase, we created a PoC with the key features and the intended look and feel. The implemented features included voice autotune, noise cancellation, tone quality adjustment, and effects such as echo and high or low voice. The customer evaluated the PoC and authorized the development of iOS video editing functionality as well as a full Android version of the app.

Disclaimer: Under the non-disclosure agreement we signed, we cannot reveal screenshots of the real system. For this case study, we created similar screenshots to give the reader an idea of the solution.

Main development phase

Audio content management features for the iOS app

While AudioKit was sufficient for the PoC, we switched to the Superpowered library, used with C++ and Swift, for the production app. We also added recording, authorization, and video tuning with masks integrated via the Banuba API. In addition, the customer’s designer prepared animations as JSON files, which we downloaded and integrated using the Lottie SDK.

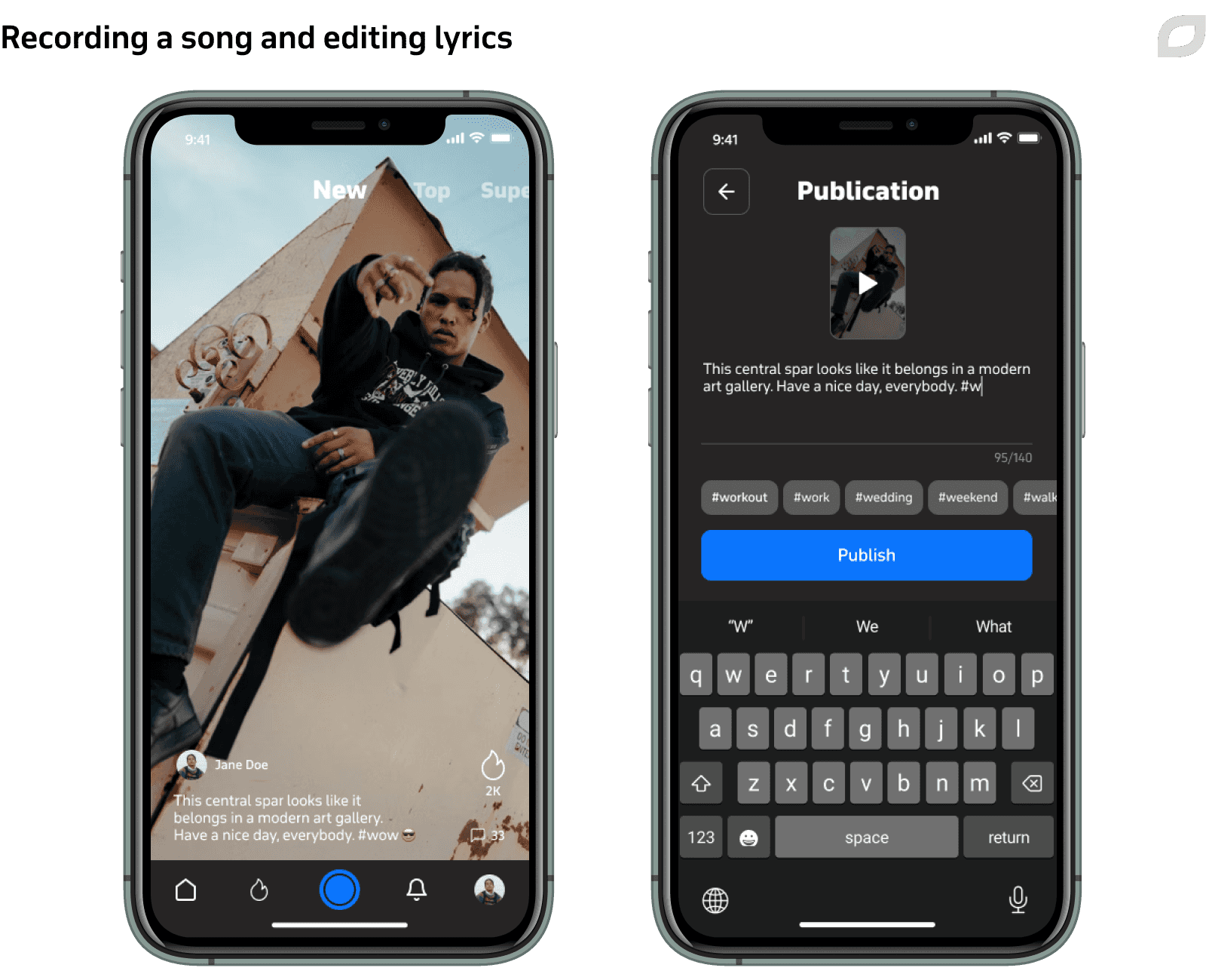

Other functionality delivered during this phase included:

- Recording tracks using beats from the catalog and optionally applying audio effects

- Publishing tracks

- Viewing and listening to the tracks published in the application, including those in the main feed, on other users’ profiles, and on the beat screen

- Reacting to any published music video on any feed

- Creating and editing text

- Sharing any published music video in the application as a file without a watermark or downloading the files and sharing them with other users

Private beta testing

Before moving on to Android app development, the customer ran a beta test for iOS users via Apple’s TestFlight, inviting 100 music artists and content consumers to test the solution.

We used Google Analytics to collect metrics such as average session time, user engagement, and user paths. Based on the gathered data, the product owner made decisions about fine-tuning the app concept to improve the UX and extend active session time. For instance, by tweaking the challenge functionality, the customer managed to triple active session time.

Android app development

As with the iOS app, we used the Superpowered library for Android development. We also used the Oboe library to achieve low audio latency on Android devices: it helped reduce the sound processing time added by the operating system and capture audio from the user’s microphone without delay.
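Why buffer size matters for latency: every audio buffer delays sound by its length in frames divided by the sample rate. The sketch below is a simplified model, not Oboe's API; the function names and the 192-frame and 1024-frame burst sizes are hypothetical, and real devices add converter, driver, and processing delays that the model ignores.

```python
def buffer_latency_ms(frames: int, sample_rate_hz: int) -> float:
    """Time (ms) represented by one buffer of `frames` audio frames."""
    if frames <= 0 or sample_rate_hz <= 0:
        raise ValueError("frames and sample rate must be positive")
    return 1000.0 * frames / sample_rate_hz


def round_trip_estimate_ms(burst_frames: int, bursts_per_buffer: int,
                           sample_rate_hz: int) -> float:
    """Simplified mic-to-speaker estimate: one full input buffer plus one
    full output buffer. Hardware and driver delays are ignored (assumption)."""
    one_side = buffer_latency_ms(burst_frames * bursts_per_buffer, sample_rate_hz)
    return 2 * one_side
```

With two bursts of 192 frames at 48 kHz, this model gives 16 ms, versus roughly 85 ms for two 1024-frame bursts. Smaller buffers lower latency but raise the risk of audible glitches if the audio callback misses its deadline, which is the trade-off low-latency audio libraries have to manage.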

To deliver the video editing functionality, Itransition’s team investigated media codecs. We shortlisted the VP8, VP9, H.264, and H.265 codecs and compared them by encoding/decoding time, processor and memory resources consumed during encoding/decoding, compression rate, and video quality. In the end, we opted for H.264 as it met all our requirements.
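The case study does not say how the criteria were weighed against each other. One common way to structure such a comparison is a weighted decision matrix, sketched below under that assumption. The criterion names, weights, and codec labels are hypothetical, and the test values are made up for illustration, not project measurements.

```python
from typing import Dict, List, Tuple

# Criteria where a lower measurement is better (time, CPU load, memory).
LOWER_IS_BETTER = {"encode_ms", "decode_ms", "cpu_pct", "memory_mb"}


def rank_codecs(measurements: Dict[str, Dict[str, float]],
                weights: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return (codec, score) pairs sorted best-first.

    Each criterion is min-max normalized to [0, 1] across codecs, inverted
    when lower is better, then combined as a weighted sum.
    """
    scores = {codec: 0.0 for codec in measurements}
    for criterion, weight in weights.items():
        values = [m[criterion] for m in measurements.values()]
        lo, hi = min(values), max(values)
        for codec, m in measurements.items():
            if hi == lo:
                norm = 1.0  # all codecs tie, so this criterion cannot discriminate
            else:
                norm = (m[criterion] - lo) / (hi - lo)
                if criterion in LOWER_IS_BETTER:
                    norm = 1.0 - norm
            scores[codec] += weight * norm
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
```

Min-max normalization puts time, memory, and quality on a common scale, so the weights alone express how much each criterion matters.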

For a better user experience, videos should start playing instantly as users scroll through the feed, but some users may have low internet bandwidth. To mitigate this, we implemented dynamic codec selection for on-device video recording and used a third-party CDN that transcodes video files on the fly, bringing them to a unified format according to each user’s bandwidth.
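The case study does not describe how a bandwidth-appropriate variant is chosen. As an assumption, a client-side rule for picking among transcoded variants could look like the sketch below; the rendition names, bitrates, and the 0.8 safety factor are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Rendition:
    name: str
    bitrate_kbps: int


def pick_rendition(renditions: Sequence[Rendition], measured_kbps: float,
                   safety_factor: float = 0.8) -> Rendition:
    """Choose the highest-bitrate rendition that fits within a fraction of the
    measured bandwidth; fall back to the lowest one when nothing fits."""
    if not renditions:
        raise ValueError("no renditions available")
    budget = measured_kbps * safety_factor
    by_bitrate = sorted(renditions, key=lambda r: r.bitrate_kbps)
    chosen = by_bitrate[0]
    for r in by_bitrate:
        if r.bitrate_kbps <= budget:
            chosen = r  # keep upgrading while the rendition still fits
    return chosen
```

The safety factor leaves headroom because bandwidth estimates fluctuate, and a slightly lower bitrate is usually preferable to a playback stall while scrolling.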

We defined the video parameters as HD resolution, a bitrate of 2.3 to 3.5 Mbit/s, 60 fps, and the H.264 codec. For full HD resolution with H.264, Google allows using up to six decoders, meaning that up to six video streams can be decoded simultaneously.
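To put the stated bitrate range in perspective, here is the per-frame budget at 60 fps and the data volume per minute of playback. This is plain arithmetic on the figures above (using 1 MB = 10^6 bytes), not a project measurement:

```latex
\begin{aligned}
\frac{2.3\ \text{Mbit/s}}{60\ \text{fps}} &\approx 38.3\ \text{kbit/frame}, &
\frac{3.5\ \text{Mbit/s}}{60\ \text{fps}} &\approx 58.3\ \text{kbit/frame} \\
\frac{2.3\ \text{Mbit/s} \times 60\ \text{s}}{8\ \text{bit/byte}} &= 17.25\ \text{MB/min}, &
\frac{3.5\ \text{Mbit/s} \times 60\ \text{s}}{8\ \text{bit/byte}} &= 26.25\ \text{MB/min}
\end{aligned}
```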

When users scroll through the feed, at least three videos are involved: the main video in the center of the screen, the previous one, and the next one. Each video stream requires decoder reinitialization, which takes time and hinders smooth playback. In addition, the customer wanted a two-dimensional feed that could be scrolled up and down or left and right. For preloading, we used ExoPlayer’s caching system together with the available decoders to ensure smooth media loading.
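The sketch below shows only the selection logic for a two-dimensional feed, not ExoPlayer's API: given the visible cell, it lists which neighboring videos to keep decoded, best first, without exceeding a decoder budget. The budget of six comes from the text above; the grid model (a rectangular grid where vertical moves keep the column), the priority order, and the function name are assumptions.

```python
from typing import List, Tuple

Cell = Tuple[int, int]  # (row, column) in the feed grid

# Priority order of offsets relative to the visible video (assumption):
# the current item first, then the neighbors the user is most likely to reach.
PRIORITY_OFFSETS: List[Cell] = [
    (0, 0),            # currently visible video
    (1, 0), (-1, 0),   # next / previous item when scrolling vertically
    (0, 1), (0, -1),   # next / previous item when scrolling horizontally
    (2, 0), (0, 2),    # one step further ahead in each direction
]


def preload_plan(row: int, col: int, rows: int, cols: int,
                 decoder_budget: int = 6) -> List[Cell]:
    """Return grid cells to keep decoded, best first, never exceeding the budget."""
    plan: List[Cell] = []
    for dr, dc in PRIORITY_OFFSETS:
        if len(plan) >= decoder_budget:
            break
        r, c = row + dr, col + dc
        if 0 <= r < rows and 0 <= c < cols:  # skip cells outside the feed
            plan.append((r, c))
    return plan
```

Keeping the plan ordered lets the player release decoders from the tail first when the budget shrinks, so the visible video and its nearest neighbors are never the ones reinitialized.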

Another issue concerned CDN usage. To start smoothly, the video editor must use the already downloaded cached video, but that content may be of poor quality due to low bandwidth. To address this, we replaced the initial cache files with higher-quality ones while the user was editing the video.
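A minimal sketch of the "start with what is cached, upgrade in the background" idea follows. It assumes vertical resolution is an adequate quality measure and that each video has one cache entry; the class, field names, and paths are hypothetical and unrelated to ExoPlayer's own cache classes.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class CachedVideo:
    video_id: str
    height: int  # vertical resolution, used as a simple quality measure (assumption)
    path: str    # location of the cached file (hypothetical)


class UpgradableCache:
    """Keeps one cached version per video and only replaces it with a better one."""

    def __init__(self) -> None:
        self._entries: Dict[str, CachedVideo] = {}

    def get(self, video_id: str) -> Optional[CachedVideo]:
        # The editor opens whatever is cached right now, so it starts without waiting.
        return self._entries.get(video_id)

    def offer(self, candidate: CachedVideo) -> bool:
        """Store the candidate if nothing is cached yet or it has higher quality.
        Returns True when the cache entry was replaced."""
        current = self._entries.get(candidate.video_id)
        if current is None or candidate.height > current.height:
            self._entries[candidate.video_id] = candidate
            return True
        return False
```

In a real editor, a `True` result would be the signal to switch the editing source to the new file while preserving the user's current position and edits.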

While iOS offers advanced tools like the AVFoundation framework for decoding videos, Android has no comparable instruments. So we turned to a brand-new Google repository for Chrome OS that provided an approach to basic video composition and editing operations on Android and Chrome OS. It demonstrated overlaying one video on another and moving them during playback, with each video having a different resolution. This process is based on ExoPlayer and does not require manual decoding.
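The geometry behind overlaying videos of different resolutions and moving them during playback can be sketched independently of any Android API: scale each source uniformly to fit its target box, then interpolate its position between keyframes. The function names and the linear keyframe model are assumptions for illustration, not the Google repository's code.

```python
from bisect import bisect_right
from typing import List, Tuple

Keyframe = Tuple[float, float, float]  # (time_s, x, y): overlay top-left in output pixels


def fit_scale(src_w: int, src_h: int, box_w: int, box_h: int) -> float:
    """Uniform scale that fits a source of any resolution inside the box,
    preserving its aspect ratio."""
    return min(box_w / src_w, box_h / src_h)


def position_at(keyframes: List[Keyframe], t: float) -> Tuple[float, float]:
    """Linearly interpolate the overlay position at time t.

    Keyframe times must be strictly increasing; outside their range the
    position is clamped to the first or last keyframe.
    """
    if not keyframes:
        raise ValueError("need at least one keyframe")
    times = [k[0] for k in keyframes]
    if t <= times[0]:
        return keyframes[0][1], keyframes[0][2]
    if t >= times[-1]:
        return keyframes[-1][1], keyframes[-1][2]
    i = bisect_right(times, t)  # keyframes[i - 1] <= t < keyframes[i]
    t0, x0, y0 = keyframes[i - 1]
    t1, x1, y1 = keyframes[i]
    a = (t - t0) / (t1 - t0)
    return x0 + a * (x1 - x0), y0 + a * (y1 - y0)
```

For example, a 1920x1080 overlay placed in a 640x640 box gets a scale of 1/3, while a 720x1280 one gets 0.5, so both fit without distortion.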

The repository also supports optional video effects like rotation, transparency, scaling, color filters, speeding up, and slowing down, and allows combining them in real time. We applied the repository’s main idea to the customer’s video editing functionality. Our team also modified the scene model, and the editor automatically applied these changes to all new frames while displaying tracking frames. Unlike the Google repository, our solution used the same code both for previewing and for creating output files, and we applied Swappy, the Android frame pacing library, to achieve smooth rendering and correct frame pacing.
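Combining effects such as scaling and rotation in real time usually comes down to composing 2D transforms into a single matrix per layer, while transparency is a per-pixel blend. The CPU-side sketch below illustrates that math under our own assumptions; a GPU pipeline would apply the same matrix in a shader, and all function names here are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]  # 3x3 homogeneous 2D transform acting on column vectors


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Return a @ b; applying the result equals applying b first, then a."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def translate(tx: float, ty: float) -> Matrix:
    return [[1.0, 0.0, tx], [0.0, 1.0, ty], [0.0, 0.0, 1.0]]


def scale(sx: float, sy: float) -> Matrix:
    return [[sx, 0.0, 0.0], [0.0, sy, 0.0], [0.0, 0.0, 1.0]]


def rotate(angle_deg: float) -> Matrix:
    # Standard math orientation; with a y-down screen this appears clockwise.
    rad = math.radians(angle_deg)
    c, s = math.cos(rad), math.sin(rad)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def layer_transform(x: float, y: float, sx: float, sy: float, angle_deg: float,
                    pivot_x: float, pivot_y: float) -> Matrix:
    """Scale and rotate a layer around its pivot, then place the pivot at (x, y)."""
    m = translate(-pivot_x, -pivot_y)  # 1. move the pivot to the origin
    m = matmul(scale(sx, sy), m)       # 2. scale
    m = matmul(rotate(angle_deg), m)   # 3. rotate
    return matmul(translate(x, y), m)  # 4. move to the final position


def apply(m: Matrix, px: float, py: float) -> Tuple[float, float]:
    return (m[0][0] * px + m[0][1] * py + m[0][2],
            m[1][0] * px + m[1][1] * py + m[1][2])


def blend(src: Sequence[float], dst: Sequence[float], opacity: float) -> Tuple[float, ...]:
    """Transparency effect: linear alpha blend of an overlay pixel onto the frame."""
    return tuple(s * opacity + d * (1.0 - opacity) for s, d in zip(src, dst))
```

Because the whole chain collapses into one matrix, changing any single parameter (for instance, the rotation angle during playback) only requires recomputing that matrix, not re-processing the layer step by step.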

At that point, we still needed to deliver video effects functionality. We used the Android SDK for basic video editing operations such as combining files, resizing, repositioning, rotating, and overlaying images, videos, and GIFs on videos. To run the OpenGL shaders that modify images according to the overlay graph, our team used the MediaPipe library.
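The case study does not describe the overlay graph's structure. Assuming it is a directed acyclic graph in which each node is a shader pass that consumes the outputs of earlier passes, the passes must run in topological order. The sketch below uses Kahn's algorithm; the pass names are hypothetical and this is not MediaPipe's API.

```python
from collections import deque
from typing import Dict, List


def shader_pass_order(deps: Dict[str, List[str]]) -> List[str]:
    """Order passes so each runs after every pass whose output it reads.

    `deps` maps a pass name to the passes it consumes. Raises ValueError if
    the graph contains a cycle, since no valid rendering order exists then.
    """
    nodes = set(deps)
    for inputs in deps.values():
        nodes.update(inputs)
    indegree = {n: 0 for n in nodes}
    consumers: Dict[str, List[str]] = {n: [] for n in nodes}
    for node, inputs in deps.items():
        for src in inputs:
            indegree[node] += 1
            consumers[src].append(node)
    # Sorting makes the order deterministic when several passes are ready at once.
    ready = deque(sorted(n for n in nodes if indegree[n] == 0))
    order: List[str] = []
    while ready:
        n = ready.popleft()
        order.append(n)
        for c in sorted(consumers[n]):
            indegree[c] -= 1
            if indegree[c] == 0:
                ready.append(c)
    if len(order) != len(nodes):
        raise ValueError("overlay graph contains a cycle")
    return order
```

For example, a GIF overlay that depends on a color-filtered main video and a decoded GIF would be scheduled only after both inputs are ready, and the final output pass would come last.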

Social network functionality

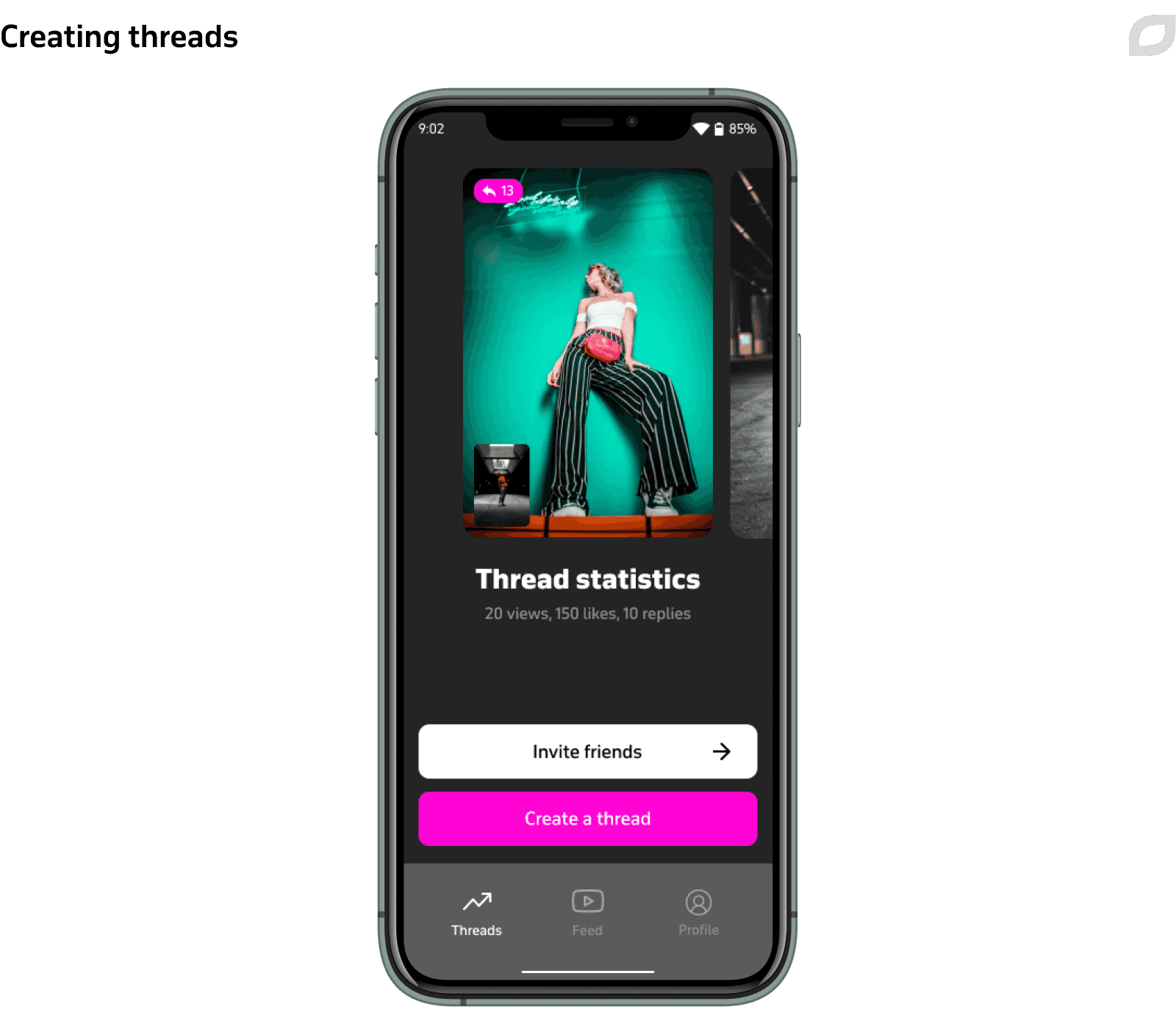

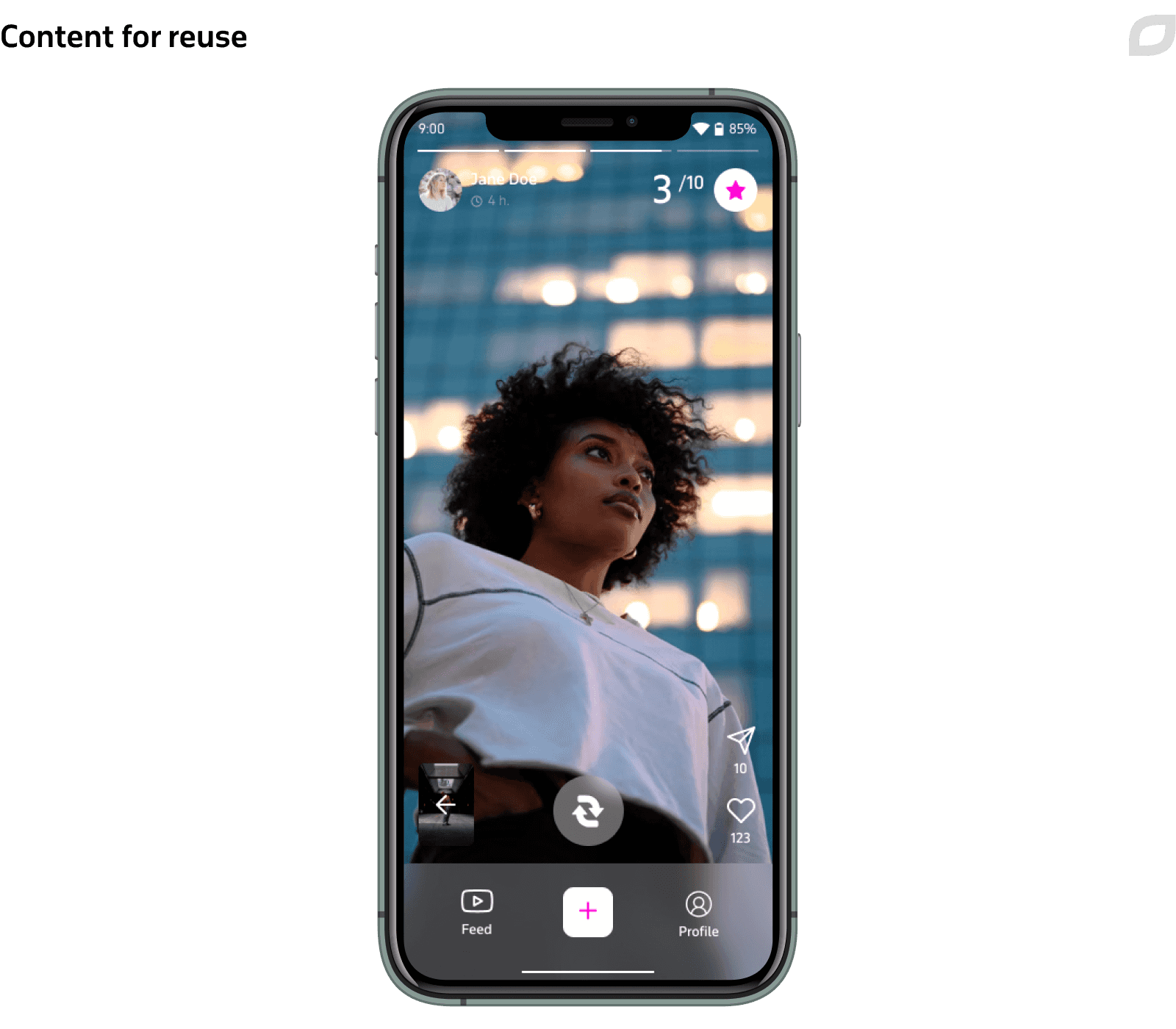

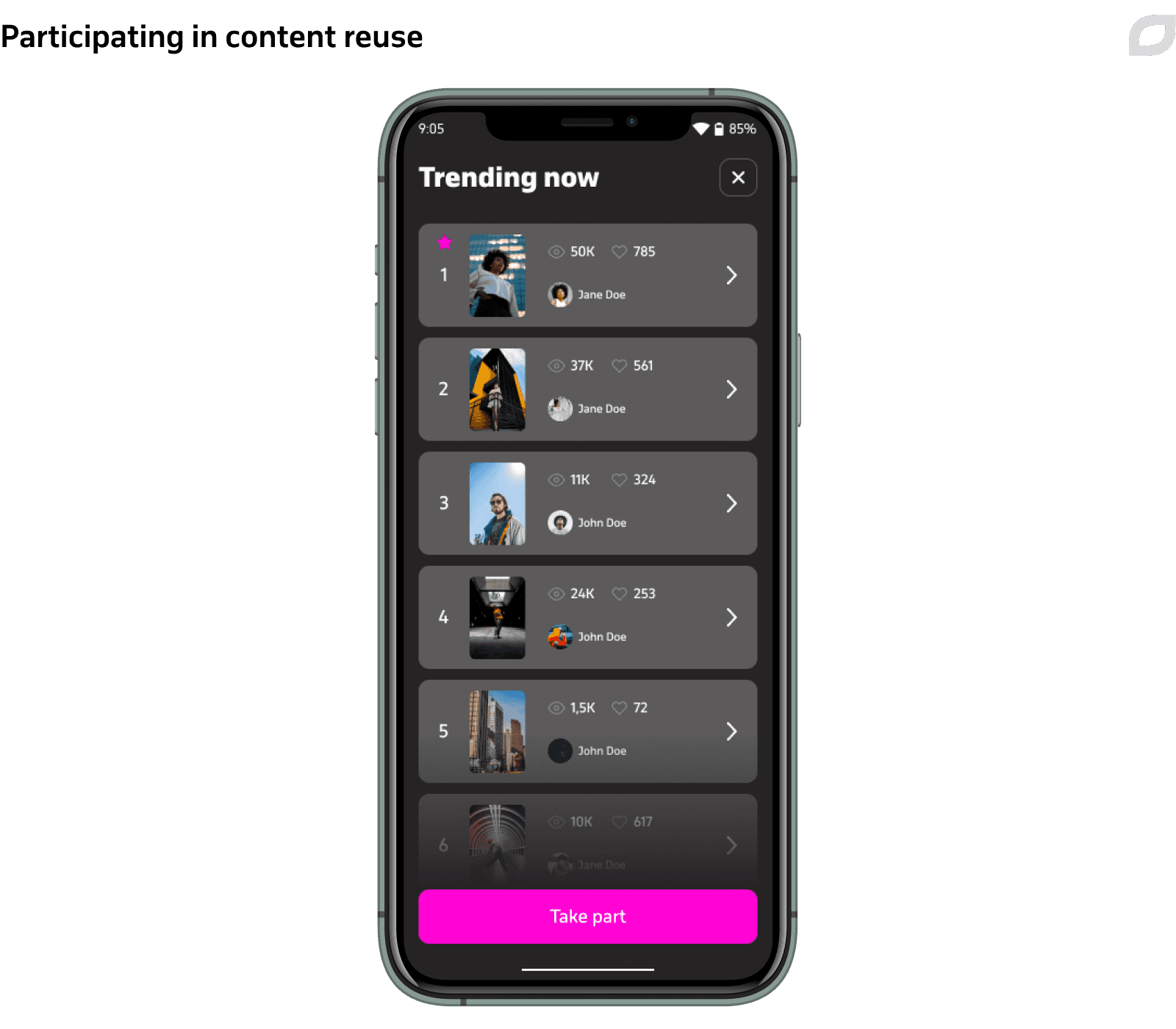

Next, we developed social network functionality to enable app users to:

- Like, comment on, and share content

- Promote their content

- Reuse the content created by other users

- Participate in challenges

- Monetize the created content

Technologies

| Technology | Purpose |
|---|---|
| Java and Spring | The apps’ backends |
| Firebase | Push notifications and app crash alerts |
| GitLab | Continuous integration |
| Firebase App Distribution | Continuous delivery |
| PostgreSQL | Relational database |
| Tarantool, Redis, and Hazelcast | In-memory database |

The generated audio and video content, as well as authorization and social media data, is stored on the backend via a REST API.

Process

The project was carried out by a mixed development team. The customer’s team was responsible for the solution concept, requested features, and decided on changes, while our team selected technical solutions and handled the technical side.


Because the customer is a fast-growing startup, we had to work quickly and navigate changing requirements and new ideas. This is why we practiced Scrum with one-week sprints and introduced sprint planning, pre-planning/backlog grooming, and retrospectives.

Due to the fast pace of work, the customer initially hesitated to establish continuous integration and use a static code analyzer, fearing it would slow down development. However, after the PoC delivery, we introduced CI and static code analysis in line with development best practices.

Results

Itransition’s team delivered iOS and Android mobile apps for generating and distributing user audio and video content. With our assistance, the customer tested the solution’s viability, delivered a proof of concept, and developed the applications. As a result, we achieved the following:

- Proved the solution’s viability with the PoC delivered in six weeks

- Performed technology research to identify the best tools for delivering the apps

- Developed the iOS and Android mobile apps, helping the customer secure Series B funding

- Synchronized the work of a mixed team and set up working processes, including sprint planning, retrospectives, estimation, and continuous delivery
