OCR algorithms: types, operation & best solutions

May 28, 2024

by Nikolay Konovalchuk,

Senior ML Engineer

Optical Character Recognition (OCR) algorithms identify typed or handwritten text in scanned documents and scene photos and convert it into a machine-readable text format. Combined with optical scanners, they enable OCR software to turn paper documents into digital files for easier processing.

Learn about types of OCR algorithms, their operation, and use cases, and find out which open-source and commercial OCR tools would be a good fit for your computer vision solution.

Main OCR algorithms

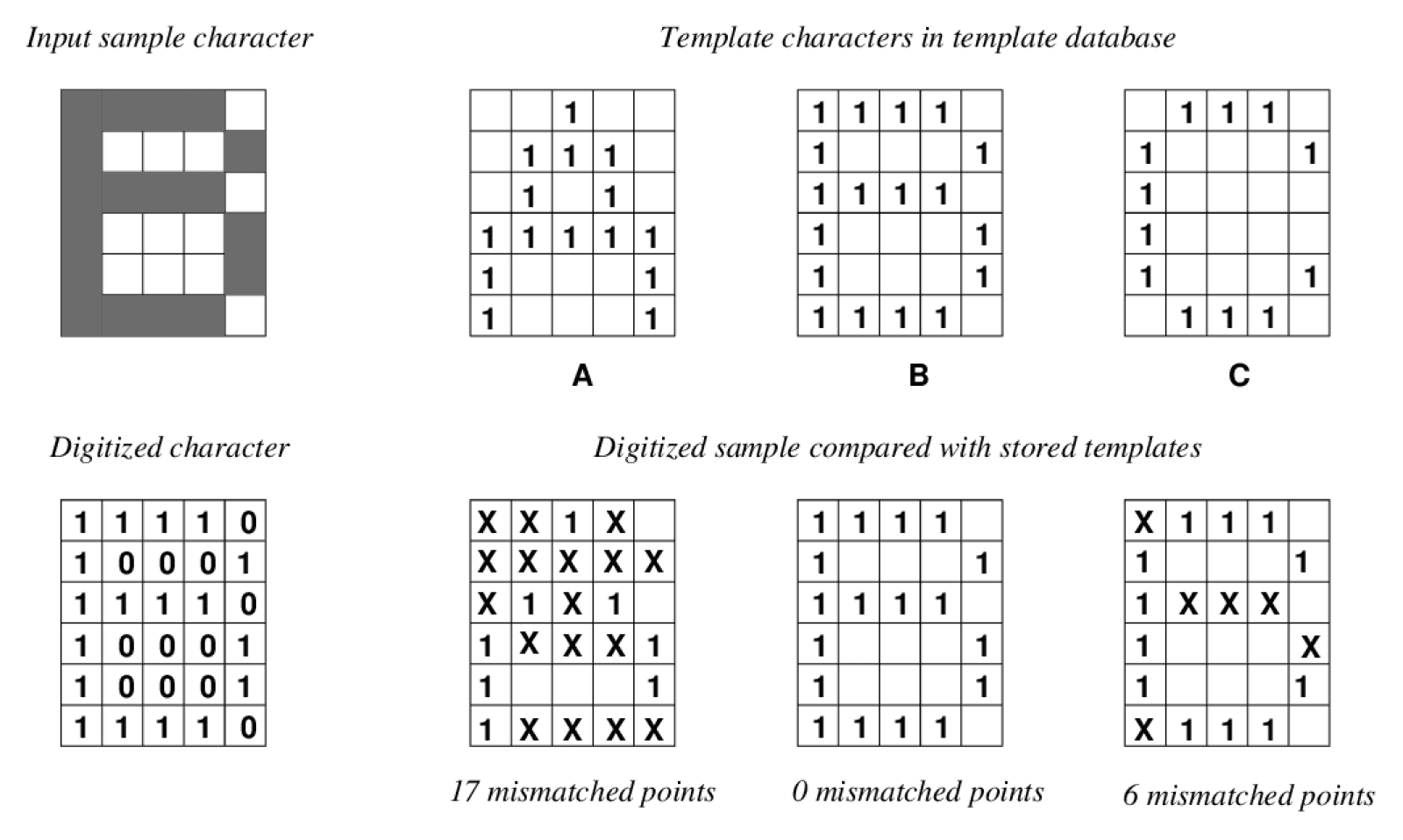

Pattern matching

Pattern matching (also called pattern recognition or template matching) algorithms isolate a character image, called a “glyph”, from the rest of the image and compare it pixel by pixel with glyphs stored as templates. Since this comparison follows a predefined set of rules and only works reliably between glyphs of similar scale and font, it is typically used to analyze scanned images of text typed in a known font.
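The pixel-by-pixel comparison described above can be sketched in a few lines. This is a minimal illustration, assuming the glyph has already been isolated, binarized, and scaled to the template size; the tiny 5x3 "font" and the agreement-ratio score are hypothetical choices, not the internals of any particular OCR engine.

```python
import numpy as np

# Hypothetical 5x3 binary templates for a tiny "known font" (1 = ink, 0 = background).
TEMPLATES = {
    "I": np.array([[0, 1, 0], [0, 1, 0], [0, 1, 0], [0, 1, 0], [0, 1, 0]]),
    "L": np.array([[1, 0, 0], [1, 0, 0], [1, 0, 0], [1, 0, 0], [1, 1, 1]]),
    "T": np.array([[1, 1, 1], [0, 1, 0], [0, 1, 0], [0, 1, 0], [0, 1, 0]]),
}


def match_glyph(glyph: np.ndarray, templates: dict) -> tuple:
    """Return (label, score) for the template whose pixels agree most with the glyph.

    The score is the fraction of pixel positions where glyph and template are equal.
    """
    best_label, best_score = "", -1.0
    for label, template in templates.items():
        if template.shape != glyph.shape:
            continue  # pixel-by-pixel comparison needs glyphs of the same size
        score = float(np.mean(template == glyph))
        if score > best_score:
            best_label, best_score = label, score
    return best_label, best_score


# Usage: an "L" with one noisy pixel is still matched to the "L" template.
noisy_l = TEMPLATES["L"].copy()
noisy_l[0, 1] = 1
print(match_glyph(noisy_l, TEMPLATES))
```

The `continue` on mismatched shapes mirrors the limitation noted above: a glyph in a different size or font simply has no comparable template.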

Image title: Template matching of digitized characters

Image source: semanticscholar.org — An Implementation of OCR System Based on Skeleton Matching

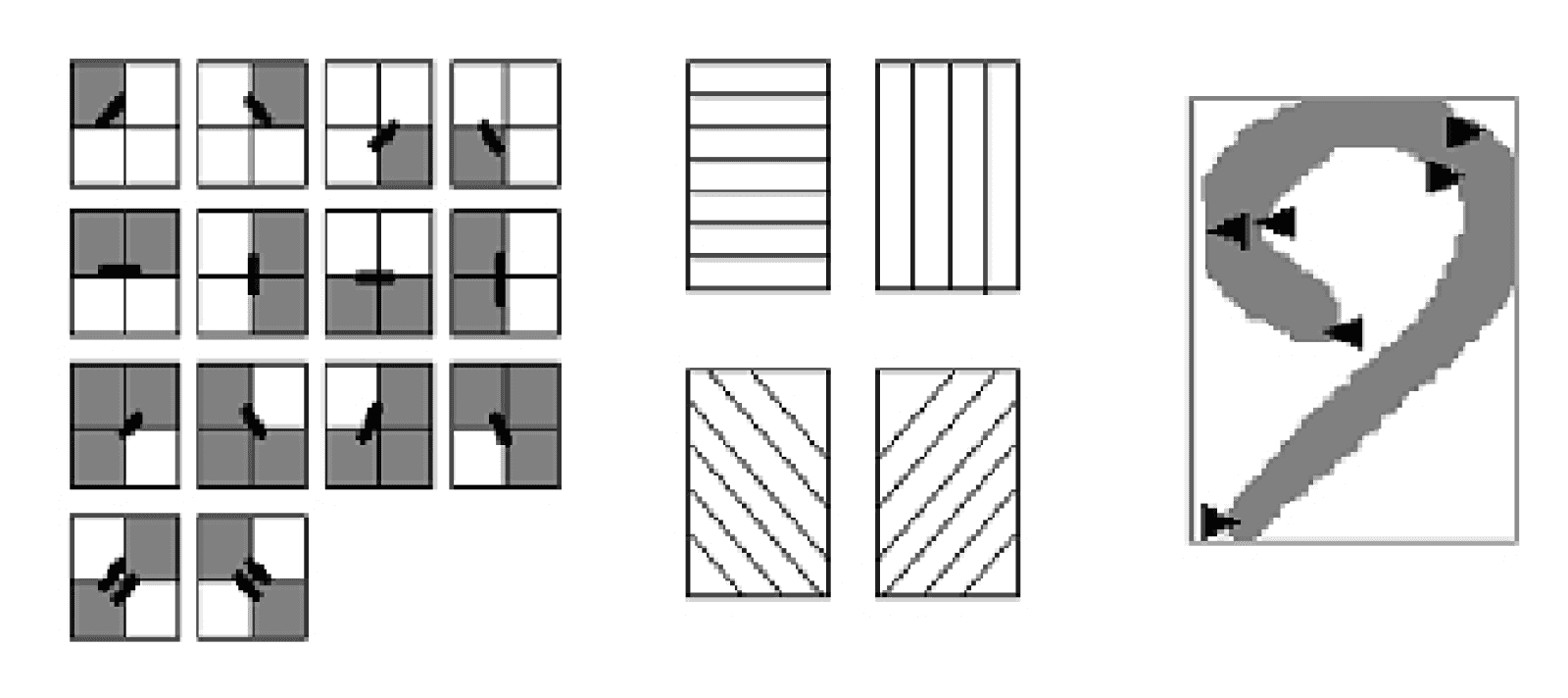

Image title: Contour direction and bending features

Image source: semanticscholar.org — An Overview of Feature Extraction Techniques in OCR for Indian Scripts Focused on Offline Handwriting

Feature extraction

Feature extraction algorithms break glyphs down into simpler features, such as angled lines, intersections, or curves, which makes recognition more computationally efficient. After detecting these features, they compare them with the features of previously stored glyphs to find the best match. This approach, which typically relies on machine learning (ML) algorithms such as k-nearest neighbors, can recognize both printed and more complex handwritten text.
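A minimal sketch of the feature-then-classify idea, with one stated substitution: instead of detecting lines, intersections, and curves, it uses simple zoning features (the ink density of each cell in a grid laid over the glyph), which are easier to compute in a short example. Classification uses k-nearest neighbors as named above; the toy stroke glyphs and the `bar` helper are hypothetical.

```python
from collections import Counter

import numpy as np


def zoning_features(glyph: np.ndarray, grid=(3, 3)) -> np.ndarray:
    """Split a binary glyph into grid cells and return the ink density of each cell."""
    bands = np.array_split(glyph, grid[0], axis=0)
    cells = [cell for band in bands for cell in np.array_split(band, grid[1], axis=1)]
    return np.array([cell.mean() for cell in cells], dtype=float)


def knn_classify(features: np.ndarray, train_features: np.ndarray, train_labels: list, k: int = 3) -> str:
    """Label a feature vector by majority vote among its k nearest (Euclidean) training vectors."""
    distances = np.linalg.norm(train_features - features, axis=1)
    nearest = np.argsort(distances)[:k]
    votes = Counter(train_labels[i] for i in nearest)
    return votes.most_common(1)[0][0]


def bar(orientation: str, positions: list, size: int = 6) -> np.ndarray:
    """Hypothetical toy glyph: a vertical or horizontal stroke on a size x size canvas."""
    glyph = np.zeros((size, size), dtype=int)
    for p in positions:
        if orientation == "vertical":
            glyph[:, p] = 1
        else:
            glyph[p, :] = 1
    return glyph


# Usage: "train" on a few stroke variants, then classify an unseen one.
train = [bar("vertical", [1]), bar("vertical", [2]), bar("vertical", [2, 3]),
         bar("horizontal", [1]), bar("horizontal", [2]), bar("horizontal", [2, 3])]
labels = ["vertical"] * 3 + ["horizontal"] * 3
train_features = np.stack([zoning_features(g) for g in train])
print(knn_classify(zoning_features(bar("vertical", [3])), train_features, labels))
```

Because the comparison happens on a compact feature vector rather than on raw pixels, small shifts in stroke position no longer break the match.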

OCR software categories

Simple optical character & word recognition software

This type of OCR software compares captured text images with predefined templates representing specific text patterns, either character by character or word by word. Because handwriting styles vary so widely that covering them would require a virtually unlimited number of stored templates, these systems can only process typewritten text.

Intelligent character & word recognition software

Rather than comparing text with predefined templates, intelligent OCR software leverages AI, specifically neural networks. These models are trained on large datasets and can then recognize text in images without relying on hand-crafted heuristics.

How does OCR work?

Traditional machine learning-based OCR

Compared to their more advanced deep learning counterparts, ML-based OCR systems are easier to develop and require less training data and computing power.

1

Image acquisition

The OCR solution uses an optical scanner or camera to acquire non-editable text content from all types of sources (flatbed scans of corporate archival material, scene text images captured by an outdoor camera, etc.) and turns it into machine-readable binary data. Binarization can be performed, for instance, by assigning “1” to black pixels and “0” to white ones.
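The binarization step can be sketched as follows. Dark pixels become 1 and light pixels 0, as described above; to choose the cut-off automatically, the sketch uses Otsu's method, a common thresholding technique that is swapped in here for illustration and is not necessarily what any product mentioned in this article uses. It assumes an 8-bit grayscale input where 0 is black and 255 is white.

```python
import numpy as np


def otsu_threshold(gray: np.ndarray) -> int:
    """Pick the gray level t that maximizes between-class variance when pixels <= t form one class.

    Expects an 8-bit grayscale image (integer values 0-255).
    """
    hist = np.bincount(gray.ravel(), minlength=256).astype(float)
    total = hist.sum()
    total_sum = float(np.dot(np.arange(256), hist))
    best_t, best_var = 0, -1.0
    count_below, sum_below = 0.0, 0.0
    for t in range(256):
        count_below += hist[t]
        sum_below += t * hist[t]
        if count_below == 0 or count_below == total:
            continue  # one class is empty, so this split separates nothing
        w0 = count_below / total
        mu0 = sum_below / count_below
        mu1 = (total_sum - sum_below) / (total - count_below)
        between_var = w0 * (1 - w0) * (mu0 - mu1) ** 2
        if between_var > best_var:
            best_t, best_var = t, between_var
    return best_t


def binarize(gray: np.ndarray, threshold=None) -> np.ndarray:
    """Return 1 for dark (ink) pixels and 0 for light (background) pixels."""
    if threshold is None:
        threshold = otsu_threshold(gray)
    return (gray <= threshold).astype(np.uint8)


# Usage: a tiny image with dark (30) ink on a light (220) background.
page = np.array([[30, 220, 220], [30, 30, 220]], dtype=np.uint8)
print(binarize(page))
```

Passing an explicit `threshold` reproduces a simple fixed cut-off; leaving it as `None` adapts the cut-off to each image's lighting.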

2

Preprocessing

The OCR software cleans up the source image so that the text is easier to discern and noise is reduced or eliminated, and prepares it for recognition. Common techniques include deskewing, layout analysis, and character segmentation.
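Of the techniques listed, character segmentation is the easiest to show in a short example. This sketch assumes a single, already binarized and deskewed text line, and uses a vertical projection profile: columns with no ink are treated as gaps between characters. It is an illustrative simplification; touching or broken characters defeat it, which is why production engines use more robust methods.

```python
import numpy as np


def segment_characters(binary_line: np.ndarray) -> list:
    """Split a binarized text line (1 = ink) into character column ranges.

    Returns (start, end) pairs with end exclusive; ink-free columns act as separators.
    """
    has_ink = binary_line.sum(axis=0) > 0  # vertical projection profile
    spans = []
    start = None
    for x, inked in enumerate(has_ink):
        if inked and start is None:
            start = x  # a new character begins
        elif not inked and start is not None:
            spans.append((start, x))  # the current character ends
            start = None
    if start is not None:
        spans.append((start, len(has_ink)))  # a character touches the right edge
    return spans


# Usage: three ink blobs separated by empty columns.
line = np.array([[1, 1, 0, 0, 1, 0, 1],
                 [1, 0, 0, 1, 1, 0, 1]])
print(segment_characters(line))
```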

3

Text recognition

The system scans the image to identify groups of pixels that are likely to constitute single characters and assigns each group to a character class. Depending on the selected approach (pattern matching or feature extraction), the solution either compares the glyphs with generalized OCR templates or prior models, or uses ML algorithms to derive features from the recurring groups of pixels and classify them.
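Finding "groups of pixels likely to constitute single characters" is often done with connected-component labeling. Below is a minimal pure-Python sketch using 8-connectivity (an illustrative choice); libraries such as OpenCV (`cv2.connectedComponentsWithStats`) and SciPy (`scipy.ndimage.label`) provide optimized versions. Each returned box could then be passed to a matcher or classifier like those sketched earlier.

```python
from collections import deque

import numpy as np


def connected_components(binary: np.ndarray) -> list:
    """Return (top, left, bottom, right) boxes, bottom/right exclusive, of 8-connected ink regions."""
    height, width = binary.shape
    seen = np.zeros(binary.shape, dtype=bool)
    boxes = []
    for y in range(height):
        for x in range(width):
            if not binary[y, x] or seen[y, x]:
                continue
            # Flood-fill this region breadth-first, tracking its extent.
            seen[y, x] = True
            queue = deque([(y, x)])
            top, left, bottom, right = y, x, y, x
            while queue:
                cy, cx = queue.popleft()
                top, bottom = min(top, cy), max(bottom, cy)
                left, right = min(left, cx), max(right, cx)
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = cy + dy, cx + dx
                        if 0 <= ny < height and 0 <= nx < width and binary[ny, nx] and not seen[ny, nx]:
                            seen[ny, nx] = True
                            queue.append((ny, nx))
            boxes.append((top, left, bottom + 1, right + 1))
    return boxes


# Usage: two separate pixel groups, i.e. two candidate characters.
image = np.array([[1, 1, 0, 0, 0],
                  [0, 1, 0, 1, 1],
                  [0, 0, 0, 0, 1]])
print(connected_components(image))
```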

4

Post-processing

After recognition, the OCR system converts the extracted text into a plain-text file or, in more advanced solutions, into an annotated PDF file that preserves the original page layout. Modern OCR software can produce highly accurate output, but users can improve accuracy even further, for instance by fine-tuning the model through additional training sessions on new text data.

Deep learning-based OCR

OCR systems leveraging deep neural networks are typically more accurate than traditional ML-based solutions.

Preprocessing

This phase differs from its counterpart in the traditional ML pipeline and relies on other techniques, such as resizing images to the input size the network expects and normalizing pixel values.
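Resizing and normalization can be sketched as below. The target height of 32 pixels and the [0, 1] value range are hypothetical choices for illustration; each network defines its own expected input size and normalization. Resampling here is a simple nearest-neighbour scheme, whereas real pipelines usually rely on an imaging library's interpolation.

```python
import numpy as np


def prepare_for_network(gray: np.ndarray, target_height: int = 32) -> np.ndarray:
    """Resize an 8-bit grayscale image to a fixed height, keeping the aspect ratio,
    and scale pixel values from 0-255 to 0.0-1.0."""
    height, width = gray.shape
    target_width = max(1, round(width * target_height / height))
    # Nearest-neighbour style resampling: map each output row/column back to a source index.
    rows = (np.arange(target_height) * height / target_height).astype(int)
    cols = (np.arange(target_width) * width / target_width).astype(int)
    resized = gray[rows[:, None], cols[None, :]]
    return resized.astype(np.float32) / 255.0


# Usage: a 64x128 crop becomes a 32x64 float array with values in [0, 1].
crop = np.full((64, 128), 255, dtype=np.uint8)
print(prepare_for_network(crop).shape)
```

Keeping the aspect ratio matters for text lines, whose width varies far more than their height.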

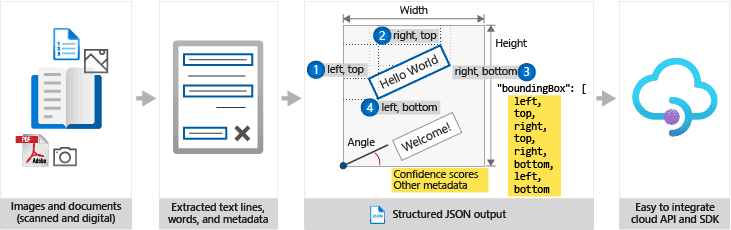

Region proposal

A region proposal model detects individual characters or words, depending on its architecture, and encloses them in bounding boxes that define regions of interest. If the model is built to detect characters, their regions are joined into word regions in one more processing step.
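The extra step that joins character regions into word regions can be as simple as merging boxes separated by a small horizontal gap. This sketch assumes all boxes belong to one text line and that a fixed `max_gap` in pixels (a hypothetical parameter) separates words; real systems typically group boxes into lines first and may learn the grouping instead.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels


def merge_into_words(char_boxes: List[Box], max_gap: int) -> List[Box]:
    """Join character boxes on one text line into word boxes when the horizontal gap is small."""
    words: List[Box] = []
    for left, top, right, bottom in sorted(char_boxes):  # process left to right
        if words and left - words[-1][2] <= max_gap:
            # Close enough to the previous box: extend the current word box.
            w_left, w_top, w_right, w_bottom = words[-1]
            words[-1] = (w_left, min(w_top, top), max(w_right, right), max(w_bottom, bottom))
        else:
            words.append((left, top, right, bottom))  # start a new word
    return words


# Usage: four character boxes forming two words.
chars = [(0, 0, 8, 10), (10, 1, 18, 10), (30, 0, 38, 12), (40, 0, 48, 10)]
print(merge_into_words(chars, max_gap=4))
```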

Text recognition

Each region is cropped and passed to a recognition model as an individual image, which yields a single word per region.
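The crop-and-recognize step can be sketched under one explicit assumption: that the recognition model, like many CRNN-style networks, outputs per-frame character probabilities decoded with connectionist temporal classification (CTC). The sketch shows cropping and greedy CTC decoding only; the hand-made probability matrix stands in for real model output, and the alphabet is hypothetical.

```python
import numpy as np


def crop_regions(image: np.ndarray, boxes) -> list:
    """Cut each (left, top, right, bottom) region out of the page as its own image."""
    return [image[top:bottom, left:right] for left, top, right, bottom in boxes]


def ctc_greedy_decode(frame_probs: np.ndarray, alphabet: str) -> str:
    """Decode per-frame class probabilities into a word.

    Class 0 is the CTC "blank"; class i > 0 maps to alphabet[i - 1].
    Greedy decoding takes the best class per frame, collapses repeats, then drops blanks.
    """
    best = frame_probs.argmax(axis=1)
    chars = []
    previous = None
    for cls in best:
        if cls != previous and cls != 0:
            chars.append(alphabet[cls - 1])
        previous = cls
    return "".join(chars)


# Usage: a stand-in for model output over 7 frames (one-hot rows for classes 1,1,0,1,2,2,0).
fake_output = np.eye(4)[[1, 1, 0, 1, 2, 2, 0]]
print(ctc_greedy_decode(fake_output, alphabet="abc"))
```

The blank class is what lets CTC emit a genuinely doubled letter: the repeated "a" survives only because a blank frame separates the two runs.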

OCR use cases by industry

Retail

Customer data entry and processing of purchase orders, invoices, and packing lists for faster inventory management and shelf life tracking.

Healthcare

Digitization of patient records (treatments, tests, insurance payments, etc.) and assistive technology for visually impaired users.

Finance, banking & insurance

Automated processing of invoices, bank statements, loan applications, receipts, or insurance claims.

Transportation & logistics

Automated number plate recognition for traffic law enforcement, traffic sign recognition for advanced driver assistance systems (ADAS), document verification in airports, and data entry from bills of lading and other documents.

Manufacturing

Scanning of waybills, invoices, bills of materials, or package labels for better supply chain visibility and warehouse management.

Business benefits of OCR adoption

With OCR, you can automate time-consuming tasks like data collection and document processing to achieve digitalization and maximize operational efficiency.

Faster data entry

OCR systems automatically scan hand-filled forms or printed documents and convert them to digital format. This reduces manual data entry and considerably speeds up the process.

Increased data accuracy

Manual data entry is tedious and therefore prone to human errors. OCR solutions identify data directly from scanned documents and perform the task with greater (although not absolute) accuracy.

Ease of storage

Once digitized, documents take up mere bytes on a server. OCR digitization also facilitates backup, since keeping digital copies in additional databases is far less challenging than storing paper duplicates in a separate physical location.

Improved customer satisfaction

OCR streamlines customer interactions, enabling clients to scan and send personal documents or completed forms remotely, without visiting in person.

Popular open-source OCR solutions

Organizations that want to avoid paying hefty licensing fees for OCR solutions can count on a wide range of FOSS (free and open-source software) engines providing built-in OCR algorithms and pre-trained models.

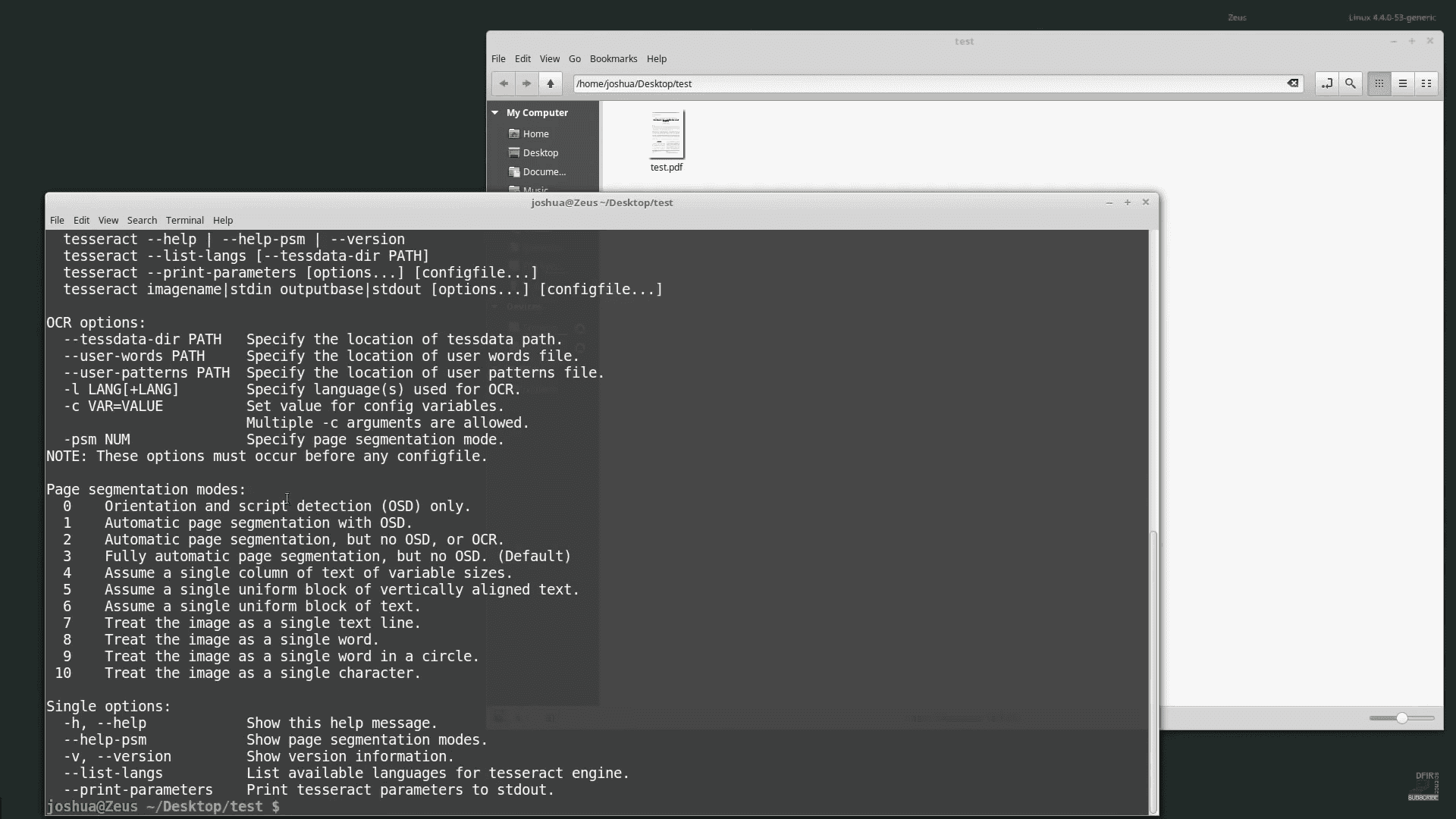

Tesseract

Tesseract is an open-source OCR engine whose development Google began sponsoring in 2006. Considered one of the most accurate OCR frameworks, Tesseract is widely lauded in the FOSS community for its capabilities.

Image title: Tesseract’s CLI interface

Image source: youtube.com — Using Tesseract — OCR to extract text from images

- The core OCR engine is available as a command-line (CLI) tool on Windows and Linux, though support on macOS is less extensive.

- Tesseract supports 116 languages by default, though you can train the engine with custom data sets to recognize other languages.

- Starting from version 4, Tesseract is based on a long short-term memory (LSTM) recurrent neural network (RNN) architecture and features automatic language recognition.

- Version 5 of Tesseract modernized the codebase and brought a significant performance improvement.

- APIs are available for various programming languages.

- A long-time caveat for Tesseract is that character images may need substantial cleaning before training.

- A wide range of FOSS and proprietary interfaces and GUIs have emerged to make use of this framework, including gImageReader (a Gtk/Qt front-end), YAGF (a graphical front-end that also accommodates Cuneiform), and OCRFeeder (a document layout analysis system).
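As a sketch of calling the CLI from Python, the helper below assembles a Tesseract command that prints recognized text to standard output. It uses the documented `-l` (language), `--oem 1` (LSTM engine only), and `--psm` (page segmentation mode) options; the helper names and the example file name are hypothetical, and wrappers such as pytesseract offer a higher-level alternative.

```python
import shutil
import subprocess
from typing import List, Optional


def build_tesseract_command(image_path: str, lang: str = "eng", psm: Optional[int] = None) -> List[str]:
    """Assemble a Tesseract CLI call that writes recognized text to stdout using the LSTM engine."""
    cmd = ["tesseract", image_path, "stdout", "-l", lang, "--oem", "1"]
    if psm is not None:
        cmd += ["--psm", str(psm)]  # e.g. 6 = assume a single uniform block of text
    return cmd


def run_tesseract(image_path: str, **options) -> str:
    """Run the command built above; requires the tesseract binary and language data installed."""
    if shutil.which("tesseract") is None:
        raise RuntimeError("tesseract binary not found on PATH")
    result = subprocess.run(build_tesseract_command(image_path, **options),
                            capture_output=True, text=True, check=True)
    return result.stdout


# Usage (not executed here): text = run_tesseract("scan.png", lang="deu", psm=6)
print(build_tesseract_command("scan.png", psm=6))
```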

EasyOCR

EasyOCR is a well-maintained repository supporting more than 80 languages and all popular script types, including Latin, Cyrillic, Chinese, and Arabic. It has its own Python package abstracting all the complexities and allowing for easy integration.
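A minimal usage sketch: `easyocr.Reader` and its `readtext` method return a list of (bounding box, text, confidence) results, which the helper below filters by confidence. The helper names and the 0.5 threshold are hypothetical; the `easyocr` import is deferred so the filtering logic can run without the package installed.

```python
def confident_texts(results, min_confidence: float = 0.5) -> list:
    """Keep the text of EasyOCR-style (bbox, text, confidence) results above a threshold."""
    return [text for _bbox, text, confidence in results if confidence >= min_confidence]


def read_image(path: str, languages=("en",), min_confidence: float = 0.5) -> list:
    """Run EasyOCR on an image file; requires the easyocr package and its model files."""
    import easyocr  # deferred import: only needed when actually running OCR

    reader = easyocr.Reader(list(languages))
    return confident_texts(reader.readtext(path), min_confidence)


# Usage with results shaped like EasyOCR output (values made up for illustration).
sample = [([[0, 0], [90, 0], [90, 20], [0, 20]], "Invoice", 0.93),
          ([[0, 25], [40, 25], [40, 40], [0, 40]], "N0.", 0.31)]
print(confident_texts(sample))
```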

PaddleOCR

Developed by Chinese tech company Baidu, PaddleOCR is an OCR toolkit based on the PaddlePaddle deep learning framework. It combines high recognition accuracy with good computational efficiency and supports over 80 languages.

Kraken

Kraken is one of several projects that emerged from the splintered OCRopus project. It is a turnkey OCR framework with CUDA support that runs on Linux and macOS (OSX) and requires external libraries. It can be installed via pip or Anaconda and must load recognition models from external sources; the project also maintains a public repository of model files.

Calamari OCR

Calamari OCR is a Python 3-based framework derived from Kraken. Its model repository focuses on historical rather than contemporary textual sources, with French as the main language offered besides English.

Top commercial OCR services

Companies requiring more comprehensive OCR services and capabilities can opt for proprietary systems offered by major cloud providers. These SaaS solutions typically include off-the-shelf OCR models and algorithms, visual information ingestion tools, and OCR APIs to connect such services to your applications.

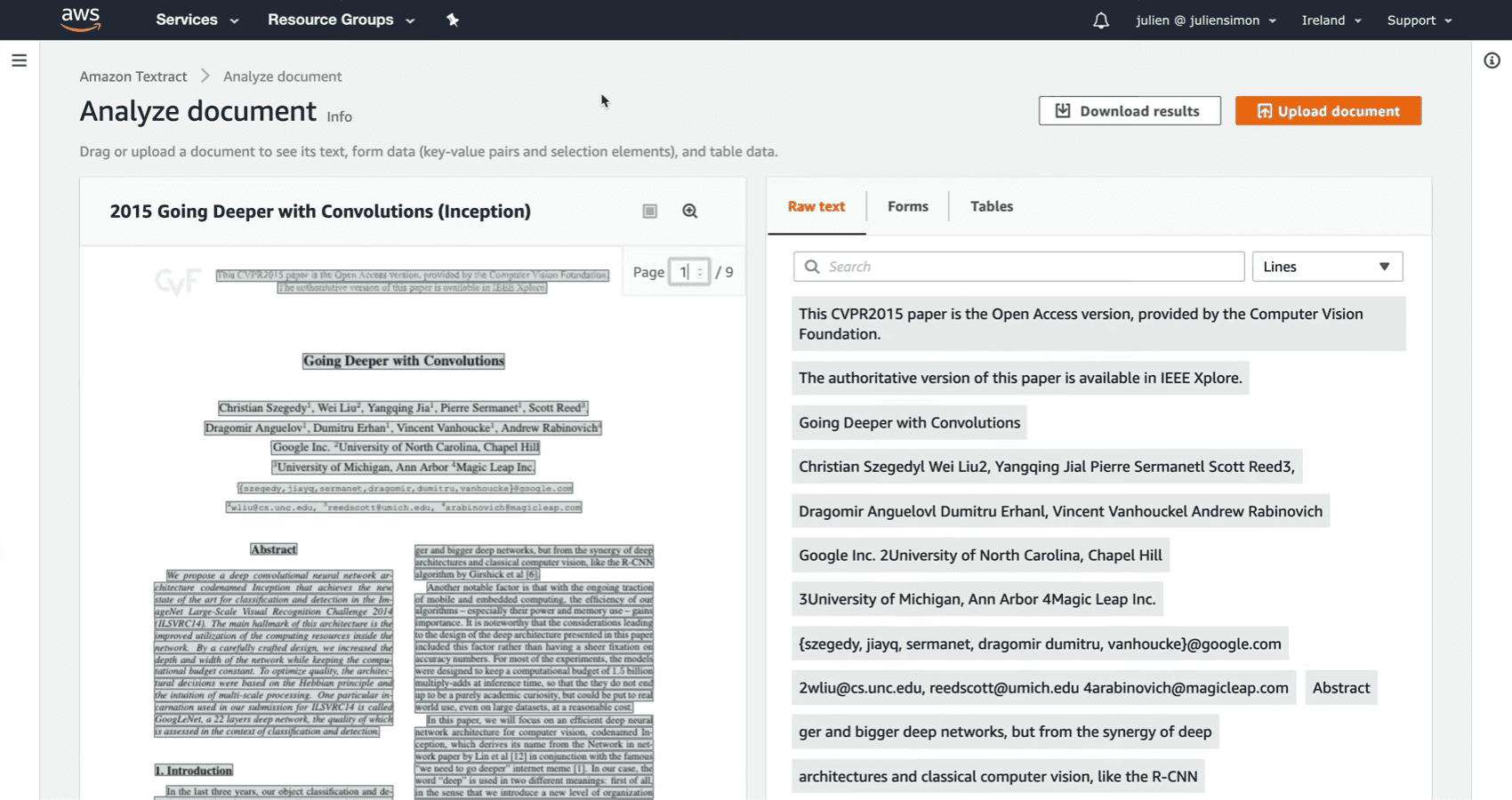

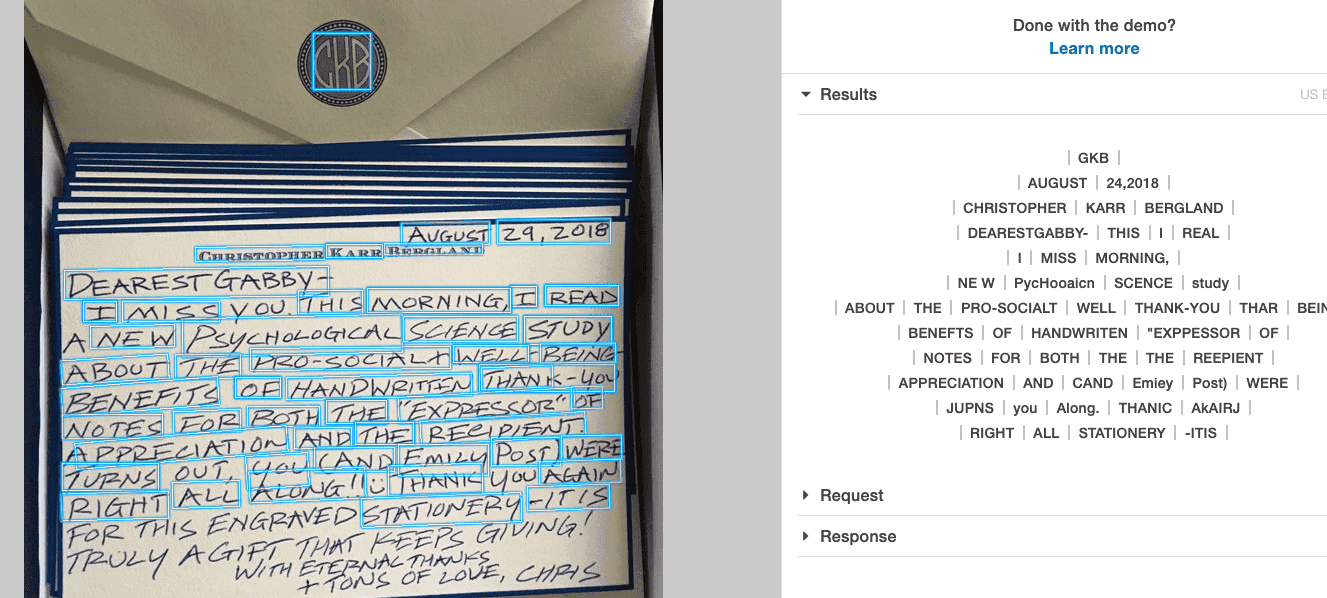

Amazon Textract/Rekognition

Amazon offers two distinct OCR services: Amazon Rekognition for detecting small amounts of text in natural images ("in the wild") and Amazon Textract for a traditional document-based OCR pipeline. Textract itself includes five different APIs:

- Detect Document Text API to extract printed text and handwriting from a document

- Analyze Document API to extract text from forms, tables, and signatures, or to query a document for the specific information you require

- Analyze Expense API to extract information from invoices and other accounting documents

- Analyze ID API to extract personal data from passports, driver’s licenses, and other IDs

- Analyze Lending API to classify and extract data from mortgage-related application documents
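As an illustration of the Detect Document Text API, the sketch below calls it through boto3's `detect_document_text` and keeps the blocks whose `BlockType` is `LINE`. It assumes AWS credentials and a region are already configured; the helper names and the sample response are hypothetical, and the boto3 import is deferred so the parsing logic can be tried offline.

```python
def lines_from_textract(response: dict) -> list:
    """Collect the text of LINE blocks from a DetectDocumentText response, in the order returned."""
    return [block["Text"] for block in response.get("Blocks", []) if block.get("BlockType") == "LINE"]


def detect_lines(path: str) -> list:
    """Send an image file to Amazon Textract; requires boto3, AWS credentials, and a region."""
    import boto3  # deferred import: only needed for real API calls

    client = boto3.client("textract")
    with open(path, "rb") as image_file:
        response = client.detect_document_text(Document={"Bytes": image_file.read()})
    return lines_from_textract(response)


# Usage with a trimmed, made-up response of the same shape.
sample_response = {"Blocks": [
    {"BlockType": "PAGE"},
    {"BlockType": "LINE", "Text": "Total due"},
    {"BlockType": "WORD", "Text": "Total"},
    {"BlockType": "LINE", "Text": "$42.00"},
]}
print(lines_from_textract(sample_response))
```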

Pricing

Rekognition includes Image, Video, Custom Labels, and Custom Moderation services, each with its own pricing. It also offers a 12-month free tier that lets customers analyze a limited amount of content per month. Textract follows a similar model, with a free tier lasting three months. Amazon publishes a detailed price list on its website, along with a comprehensive online calculator to help estimate potential costs.

Image title: Amazon Textract in action

Image source: youtube.com — Amazon Textract — Extracting text, tables and forms from documents

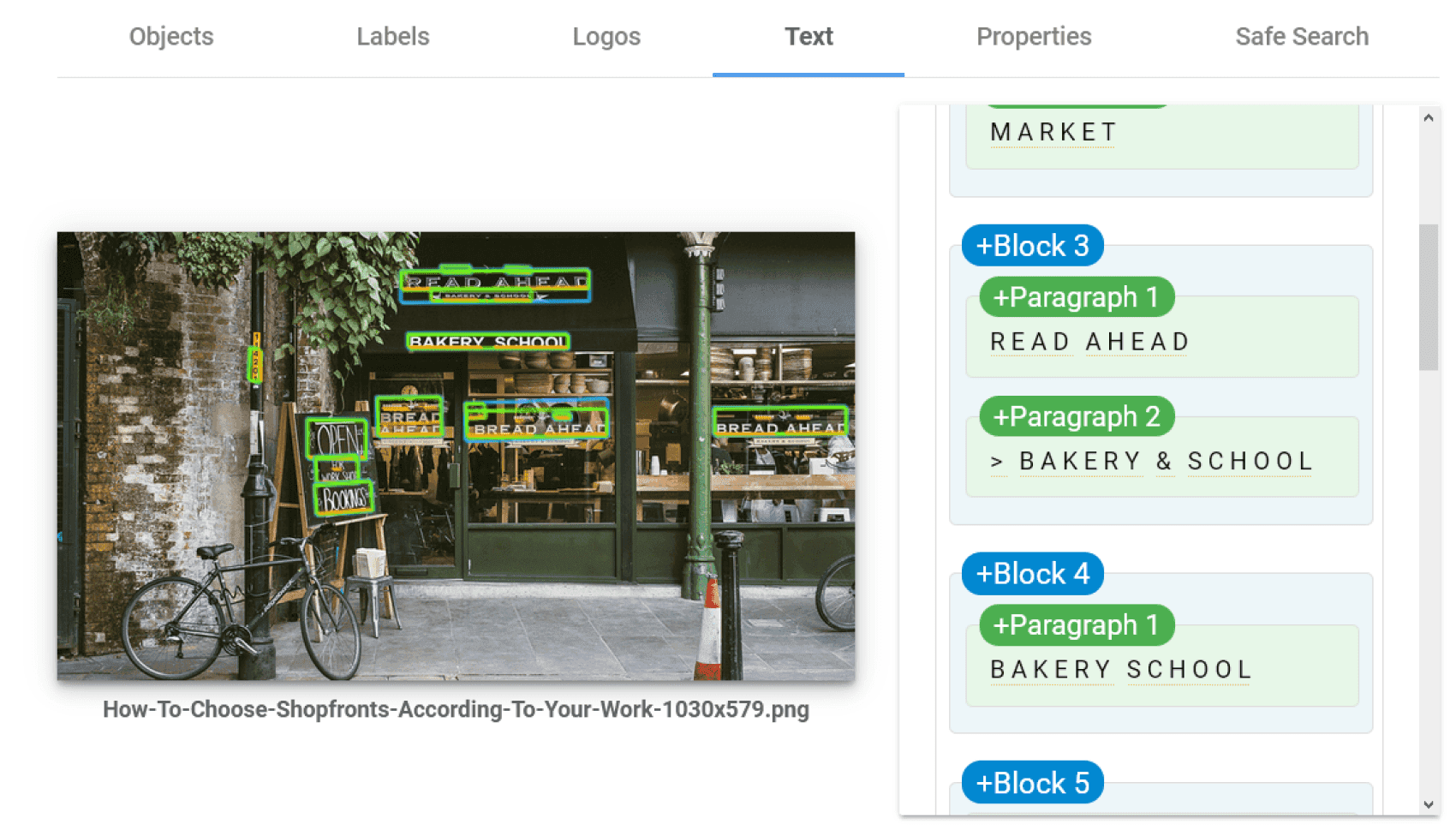

Google Cloud Vision

Google offers two types of text detection in the form of API calls: Text Detection and Document Text Detection. The first is aimed at sparse amounts of text in images (such as images of signs for AR/VR or navigation products), while the second has a more traditional document OCR functionality.

Google's vision offering also includes Vertex AI Vision, a development environment for building and managing computer vision applications. It provides developers with a built-in pipeline for real-time data stream ingestion, pre-trained ML models, and warehousing capabilities. Vertex AI now incorporates the service formerly known as AutoML Vision, a proprietary model-training framework for creating your own ML models for OCR and other computer vision tasks.

Pricing

The first 1,000 units per feature (Text Detection, Document Text Detection, etc.) used each month are free. After that, you pay $1.50 per 1,000 units per month, and the price decreases to $0.60 per 1,000 units per month beyond 5,000,000 units.
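The tiered pricing above can be turned into a quick estimate. This sketch applies the tiers exactly as quoted in this article (units 1 to 1,000 free, units 1,001 to 5,000,000 at $1.50 per 1,000, and units beyond 5,000,000 at $0.60 per 1,000) to a single feature; prices change, so Google's current price list should be checked before relying on the numbers.

```python
def vision_monthly_cost(units: int) -> float:
    """Estimate one feature's monthly Cloud Vision cost in USD using the tiers quoted above."""
    free_units, tier_break = 1_000, 5_000_000
    standard_units = max(0, min(units, tier_break) - free_units)  # billed at $1.50 per 1,000
    discounted_units = max(0, units - tier_break)                  # billed at $0.60 per 1,000
    return standard_units / 1000 * 1.50 + discounted_units / 1000 * 0.60


# Usage: estimates for a few monthly volumes of one feature.
for volume in (800, 1_000_000, 6_000_000):
    print(volume, round(vision_monthly_cost(volume), 2))
```

For example, 1,000,000 Document Text Detection units in a month come to 999 × $1.50 = $1,498.50 under these tiers; each feature is billed separately, so costs for several features add up.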

Image title: Google Cloud Vision

Image source: cloud.google.com — Cloud Vision API

Microsoft Azure AI Vision

Microsoft's optical character recognition services are just one aspect of Azure AI Vision, which also includes image analysis, spatial analysis, and facial recognition. As for pure text recognition, you can find the related features in the Vision Studio toolset.

Azure AI Vision’s OCR engine, namely Read, is powered by multiple ML models supporting global languages and is available both as a cloud service and an on-premises container. It provides two OCR functionalities and respective APIs: recognition of general, in-the-wild images, such as street signs or posters, and analysis of text-heavy scanned and digital content for easier document processing.

Pricing

Microsoft provides OCR not as a stand-alone feature but in combination with other tools for the detection of celebrities, landmarks, brands, and generic objects. The price starts from $1 per 1,000 transactions for the first million units and decreases with higher volumes.

Image title: Microsoft's Read Vision API workflow

Image source: docs.microsoft.com — What is Optical character recognition?

Other commercial OCR tools

There is also a broader range of mid-level commercial OCR solutions available, including:

- Cloudmersive Optical Character Recognition API

OCR is one of Cloudmersive's range of APIs with support for 90 languages and automatic segmentation and preprocessing. A complex hierarchy of pricing ranges from 'Small Business' to 'Government'. - Free OCR API

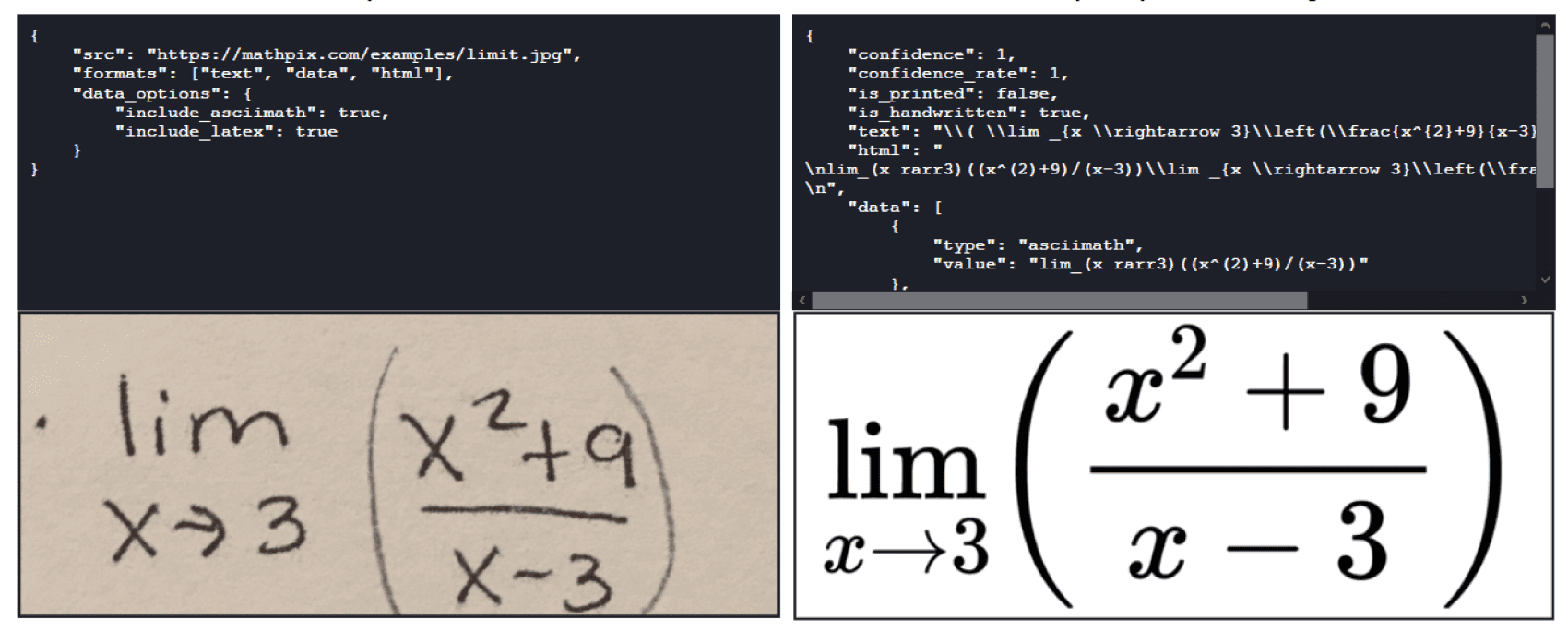

Free OCR API provides Pro PDF and Enterprise tiers in its OCR offering currently set at $60 and $299+ p/m, respectively. They boost the permitted page length from a fairly useless (watermarked) three pages to 999+ pages. - Mathpix API

Mathpix OCR offers an API aimed at STEM companies that supports the extraction of mathematical formulae and their translation into a proprietary markdown format (helpful to add formatting elements like headings or URLs to plain text without using a text editor). The platform offers two free plans for generic users and students/educators respectively, along with a Pro plan ($4.99 per month).

Image title: Mathpix OCR

Image source: mathpix.com — OCR API for STEM

Large language models with vision capabilities

VisionLLMs embody the concept of multimodal AI, namely a combination of computer vision and natural language processing. These models can ingest information from multiple types of input, including images and their textual descriptions, which gives them a stronger understanding of context. They also let users perform more complex and interactive tasks than "basic" OCR, such as asking the system through a written prompt to extract textual data from a picture. Here are some major examples of VisionLLMs:

GPT-4 Turbo with Vision

OpenAI’s large multimodal model combines textual and visual understanding and can execute tasks like handwriting OCR, image classification, and visual question answering. However, the extraction of sensitive data is limited for privacy protection. The model can also be used in Microsoft Azure. OpenAI provides a pricing calculator for this model on its website.
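Prompt-driven OCR with such a model amounts to sending an image together with an instruction. The sketch below builds a Chat Completions request body that embeds the image as a base64 data URL in an `image_url` content part; the model name `gpt-4-turbo`, the prompt wording, and the `max_tokens` value are assumptions to adjust. With the official `openai` Python package, the body could be passed as `client.chat.completions.create(**body)`.

```python
import base64


def build_ocr_request(image_bytes: bytes, media_type: str = "image/png",
                      model: str = "gpt-4-turbo") -> dict:
    """Build a Chat Completions request body asking a vision model to transcribe an image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:{media_type};base64,{encoded}"
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": "Transcribe all text in this image verbatim."},
                {"type": "image_url", "image_url": {"url": data_url}},
            ],
        }],
        "max_tokens": 1000,
    }


# Usage: in practice, image_bytes would come from reading a scanned page.
body = build_ocr_request(b"\x89PNG placeholder bytes")
print(body["messages"][0]["content"][1]["image_url"]["url"][:30])
```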

Gemini 1.5

Google DeepMind’s multimodal AI includes the model itself and the chatbot interface (formerly Bard) based on it. Gemini can interpret different types of information (textual, visual, etc.) and perform OCR on natural images, document understanding, and many other tasks. Google offers both a free version in AI Studio and a pay-as-you-go option.

Claude 3

Anthropic's Claude 3 models offer comprehensive capabilities covering, for instance, optical character recognition, text and code generation, and multilingual translation. The models are also available on Amazon Bedrock and Google Cloud Vertex AI. Users can choose among three Claude 3 models (Haiku, Sonnet, and Opus) with different capabilities and prices.

OCR tool selection guidelines

Open-source vs commercial OCR solutions

Open-source

Pros

By their nature, FOSS OCR tools are easily accessible, which makes them ideal for businesses with limited budgets. These engines can be customized by any user with the necessary expertise to fit specific requirements. Despite their non-commercial purpose, many open-source OCR tools receive regular fixes and updates from active communities of contributors or major IT enterprises.

Cons

Compared to commercial OCR services, implementation can be more challenging and generally requires greater effort from your internal IT team or outsourced experts. Community-driven support (forums, documentation, etc.) cannot compete with proprietary platforms' maintenance and technical assistance.

Commercial

Pros

Proprietary OCR solutions usually outperform most FOSS tools, as they rely on state-of-the-art technology funded by Big Tech's regular investments. Commercial OCR offerings typically include intuitive automation pipelines, ongoing updates, and dedicated user support to maximize ease of adoption and ensure a smooth customer experience. SaaS solutions already integrate many FOSS packages and recognition models into a functional OCR pipeline (data management, processing, etc.), so adopters don't have to build it themselves.

Cons

Licensing fees that scale with usage, combined with uncertainty around future pricing policies, can discourage adopters. Customers must either commit to a hybrid or cloud-based OCR architecture or accept the data security risks of connecting on-premises systems to cloud-based commercial APIs.

Navigating SaaS OCR’s ever-changing offering

Concerns

OCR API market leaders not only offer different products for different types of OCR scenarios, but these products also vary in terms of architecture, features, available dataset templates to organize data, software modules, and processing pipeline capabilities.

Major OCR providers update their service offerings frequently, which makes it hard to produce comparisons that stay accurate over the long term.

New benchmarks comparing SaaS OCR services in terms of text prediction error count, accuracy rates, and other metrics are published periodically. However, these sporadic surveys rarely cover a wide enough range of SaaS offerings, focusing instead on the largest providers.
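When running your own comparison, one common way to count prediction errors is the character error rate (CER): the edit (Levenshtein) distance between a service's output and a hand-checked reference transcription, divided by the reference length. The sketch below is a plain implementation of that metric; the sample strings are made up.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, and substitutions turning a into b."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(previous[j] + 1,                # delete ca
                               current[j - 1] + 1,             # insert cb
                               previous[j - 1] + (ca != cb)))  # substitute (free if equal)
        previous = current
    return previous[-1]


def character_error_rate(reference: str, prediction: str) -> float:
    """Edit distance normalized by the length of the reference transcription."""
    if not reference:
        raise ValueError("reference text must not be empty")
    return levenshtein(reference, prediction) / len(reference)


# Usage: one substitution ("o" read as "0") and one dropped character over 10 reference characters.
print(character_error_rate("Invoice 42", "Inv0ice 4"))
```

Computing CER on the same sample documents for every candidate service gives a like-for-like number that generic published rankings cannot provide for your data.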

Recommendations

Since customer use cases and data will differ from one another, and SaaS OCR test rankings are constantly in flux, you should take advantage of initial free credits and trial periods.

Develop a modular OCR framework that can switch relatively easily between APIs to accommodate an exploratory phase for the project.
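One way to keep API switching cheap is to hide each provider behind a common interface and select it by name, for example from a configuration file. The sketch below uses a `typing.Protocol` and a small registry; all names are hypothetical, and `EchoBackend` is a stand-in where real adapters would call Textract, Cloud Vision, Azure AI Vision, and so on.

```python
from typing import Callable, Dict, Protocol


class OcrBackend(Protocol):
    """Anything that turns image bytes into text; each vendor API gets its own adapter."""

    def recognize(self, image_bytes: bytes) -> str: ...


_BACKENDS: Dict[str, Callable[[], OcrBackend]] = {}


def register_backend(name: str):
    """Class decorator that makes a backend selectable by name."""
    def decorator(factory):
        _BACKENDS[name] = factory
        return factory
    return decorator


def get_backend(name: str) -> OcrBackend:
    """Instantiate the backend registered under name, or fail with the available options."""
    if name not in _BACKENDS:
        raise ValueError(f"unknown OCR backend {name!r}; available: {sorted(_BACKENDS)}")
    return _BACKENDS[name]()


@register_backend("echo")
class EchoBackend:
    """Hypothetical stand-in for tests; a real adapter would call a vendor's OCR API."""

    def recognize(self, image_bytes: bytes) -> str:
        return image_bytes.decode("utf-8", errors="replace")


def run_ocr(image_bytes: bytes, backend_name: str) -> str:
    # Switching providers becomes a configuration change rather than a code change.
    return get_backend(backend_name).recognize(image_bytes)


print(run_ocr(b"hello", "echo"))
```

During the exploratory phase, each trial account then needs only one small adapter class, and the rest of the application stays untouched.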

Image title: Amazon Rekognition

Image source: amplenote.com

Image title: Google Cloud Vision OCR

Image source: amplenote.com

Our computer vision services

Itransition offers a full range of consulting and development services to help organizations build and adopt computer vision solutions, including OCR software, fully tailored to their business requirements.

Consulting

We offer expert guidance to streamline your computer vision adoption project and overcome potential implementation roadblocks.

- Use case identification

- Existing solution assessment (if any)

- Data mapping and quality audit

- Tech stack selection

- Software architecture design

- Project planning and budgeting

- Development process review

- User training and support

Development

We create computer vision solutions powered by AI algorithms and trained on large, high-quality data sets to achieve optimal performance.

- ETL pipeline configuration

- Data pre-processing (cleansing, annotation, and transformation)

- Algorithm selection

- AI model training

- API creation and software integration

- End-to-end testing

- Post-launch model fine-tuning and on-demand software modernization

OCR as a digitization catalyst

Driven by the urgent need to shift towards a digital business model, many organizations have found optical character recognition to be a valuable ally. OCR systems can convert bulky documents and other paper-based resources into easily manageable files, turning "paperwork" into something that requires no "paper" and much less "work". Although OCR is considered complicated and expensive to implement, companies can streamline its adoption by turning to open-source OCR systems or SaaS solutions. To select the most suitable engine or build OCR software from scratch, consider relying on an expert partner like Itransition.
